An inventory contains a list of hostnames or IP addresses and is commonly written in INI format (YAML is also supported). In Ansible, we have static and dynamic inventories. Even ad hoc actions performed on the localhost require an inventory, though that inventory may consist of just the localhost. The inventory is the most basic building block of the Ansible architecture. When executing ansible or ansible-playbook, an inventory must be referenced. Inventories are either files or directories that exist on the same system that runs ansible or ansible-playbook. The location of the inventory can be given at runtime with the -i (--inventory) argument, or by defining the path in an Ansible config file.
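As a simplified illustration of where the inventory comes from, the Python sketch below gives an explicit -i value priority, then the inventory setting under [defaults] in ansible.cfg, and finally the default /etc/ansible/hosts. It deliberately ignores the ANSIBLE_INVENTORY environment variable and the config file search order. resolve_inventory is a hypothetical helper, not part of Ansible.

```python
import configparser
from typing import Optional

DEFAULT_INVENTORY = "/etc/ansible/hosts"


def resolve_inventory(cli_inventory: Optional[str], cfg_text: str) -> str:
    """Simplified lookup: -i wins, then [defaults] inventory, then the default path."""
    if cli_inventory:
        return cli_inventory
    # ansible.cfg is INI-style; interpolation is disabled so '%' is taken literally
    parser = configparser.ConfigParser(interpolation=None)
    parser.read_string(cfg_text)
    # fallback covers both a missing [defaults] section and a missing key
    return parser.get("defaults", "inventory", fallback=DEFAULT_INVENTORY)


cfg = "[defaults]\ninventory = /home/ansible/myinventory\n"
print(resolve_inventory(None, cfg))
print(resolve_inventory("ec2.py", cfg))
print(resolve_inventory(None, "[defaults]\n"))
```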

Dynamic Inventory

Copy the contents of ec2.py and ec2.ini from the Ansible inventory scripts on GitHub and create new files with the same content on the controller.

[ansible@controller ~]$ ls -l

total 740

-rwxrwxrwx. 1 root root 148801 Sep 20 16:40 ec2.ini

-rwxrwxrwx. 1 root root 605527 Sep 20 16:40 ec2.py

I have changed the shebang line inside ec2.py from "#!/usr/bin/env python" to "#!/usr/bin/env python3", because the downloaded script used python as the interpreter while we are using python3.

Next, we try to execute the script, but it complains about the missing boto module:

[ansible@controller ~]$ ./ec2.py

Traceback (most recent call last):

File "./ec2.py", line 164, in <module>

import boto

ModuleNotFoundError: No module named 'boto'

Let us install the missing boto module using pip3:

[ansible@controller ~]$ sudo pip3 install boto

WARNING: Running pip install with root privileges is generally not a good idea. Try `pip3 install --user` instead.

Collecting boto

Downloading https://files.pythonhosted.org/packages/23/10/c0b78c27298029e4454a472a1919bde20cb182dab1662cec7f2ca1dcc523/boto-2.49.0-py2.py3-none-any.whl (1.4MB)

100% |████████████████████████████████| 1.4MB 915kB/s

Installing collected packages: boto

Successfully installed boto-2.49.0

Assign IAM role to the Ansible Engine server

If we re-execute the script now, we get the following error:

boto.exception.NoAuthHandlerFound: No handler was ready to authenticate. 1 handlers were checked. ['HmacAuthV4Handler'] Check your credentials

This means boto could not find any AWS credentials. To fix this, we will assign an IAM role to the Ansible Engine instance.

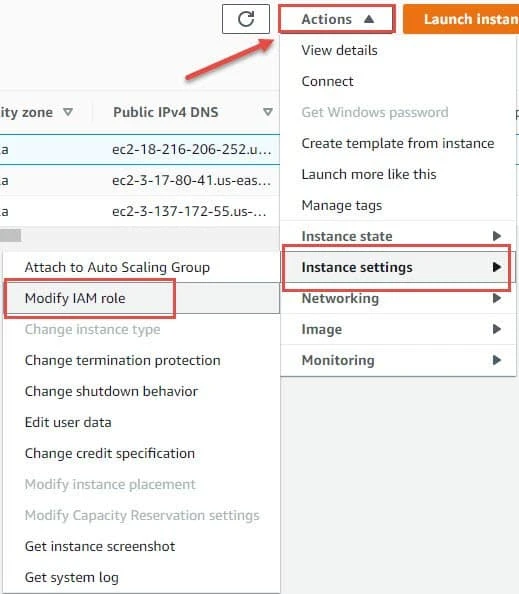

Select your ansible engine instance, click on Actions and from the drop-down menu select "Instance Settings" → "Modify IAM role"

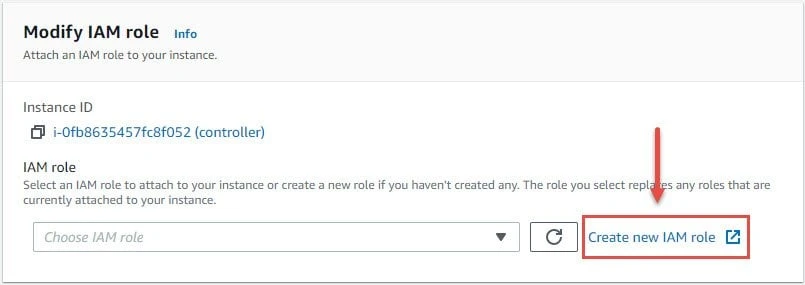

Since I don't have a role yet, I will create a new one. Click on "Create new IAM role", which will open a new browser tab.

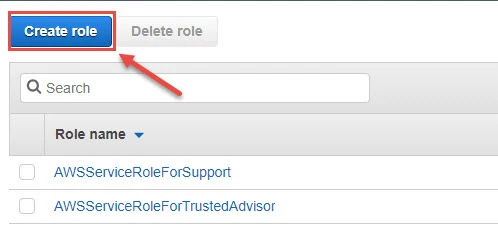

Click on "Create role"

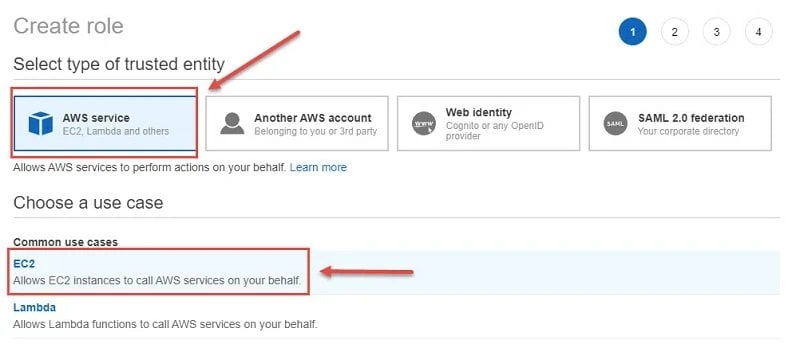

Select "AWS service" as your entity and then select EC2 as your use case. Click on "Next: Permissions"

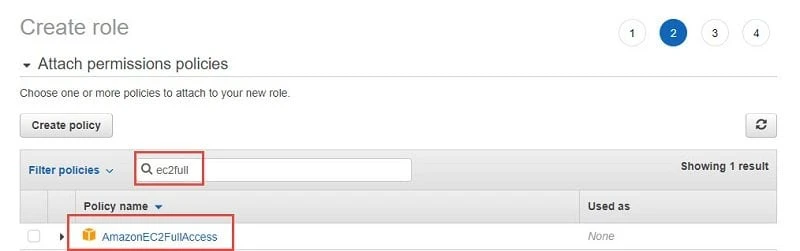

Search for "ec2full" in the search bar and select "AmazonEC2FullAccess". Click on "Next: Tags"

We will use the tags field for dynamic inventory later. For now let's leave this field empty and click on "Next: Review"

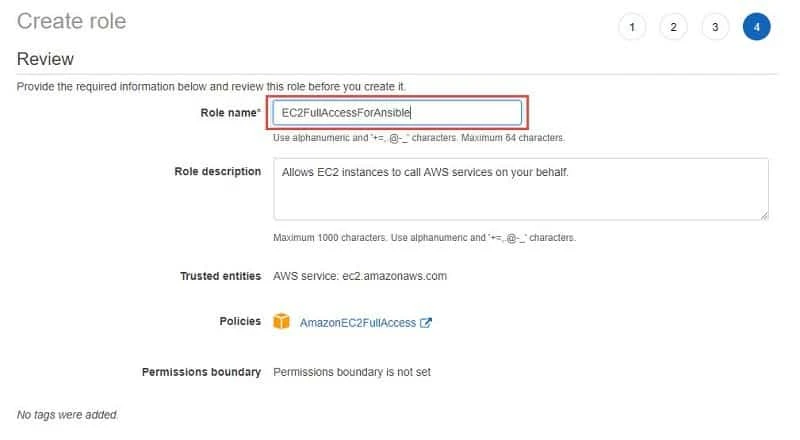

Assign a role name; we have used "EC2FullAccessForAnsible". Click on "Create Role"

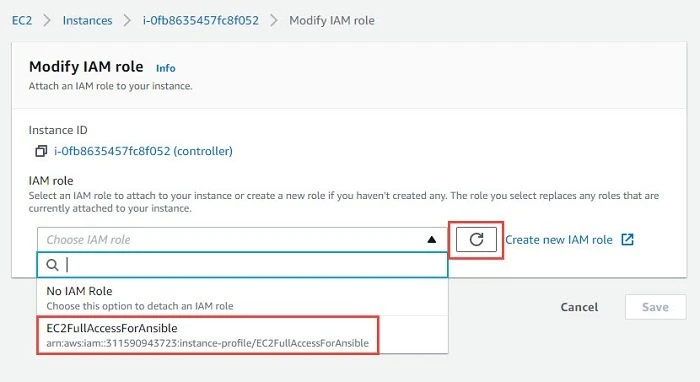

Once the role is created successfully, come back to the "Modify IAM role" tab and click the refresh button to load the changes we just made. You should now see your newly created role in the drop-down.

Select the role and click on Save

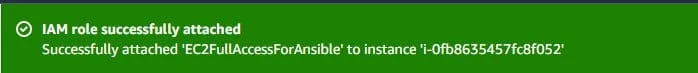

If all is good then you should see a message "IAM role successfully attached"

When we run the script again, we get another error:

[ansible@controller ~]$ ./ec2.py

ERROR: "Forbidden", while: getting ElastiCache clusters

I searched online and found a GitHub page where this error was reported, but a fix was not available at the time of writing this tutorial. So I disabled ElastiCache in ec2.ini, as I don't need it for this demonstration anyway:

[ansible@controller ~]$ grep ^elasticache ec2.ini

elasticache = False

Now let us re-run the script (fingers crossed)

[ansible@controller ~]$ ./ec2.py

{

"_meta": {

"hostvars": {

"18.216.206.252": {

"ansible_host": "18.216.206.252",

"ec2__in_monitoring_element": false,

"ec2_account_id": "311590943723",

"ec2_ami_launch_index": "2",

"ec2_architecture": "x86_64",

"ec2_block_devices": {

"sda1": "vol-04186fa5137af2ce7"

},

<output trimmed>

"us-east-2": [

"18.216.206.252",

"3.17.80.41",

"3.137.172.55"

],

"us-east-2a": [

"18.216.206.252",

"3.17.80.41",

"3.137.172.55"

],

"vpc_id_vpc_232f8148": [

"18.216.206.252",

"3.17.80.41",

"3.137.172.55"

]

}

Bingo! We now get the list of hosts from our dynamic inventory.

The script also creates groups based on instance attributes, such as the region (us-east-2) and the availability zone (us-east-2a).

We can find the list of our instances under the "ec2" group in this output:

"ec2": [

"18.216.206.252",

"3.17.80.41",

"3.137.172.55"

],
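To work with this output programmatically, here is a minimal Python sketch that parses the JSON printed by a dynamic inventory script and maps each group to its hosts, skipping the special _meta key. The sample is a trimmed copy of the output above; in practice you could feed it the stdout of ./ec2.py --list. groups_from_inventory is a hypothetical helper.

```python
import json

# Trimmed sample of the JSON printed by ./ec2.py (values copied from the output above)
sample = """
{
  "_meta": {"hostvars": {"18.216.206.252": {"ansible_host": "18.216.206.252"}}},
  "ec2": ["18.216.206.252", "3.17.80.41", "3.137.172.55"],
  "us-east-2": ["18.216.206.252", "3.17.80.41", "3.137.172.55"]
}
"""


def groups_from_inventory(raw):
    """Map group name -> list of hosts, skipping the special _meta key."""
    data = json.loads(raw)
    groups = {}
    for name, value in data.items():
        if name == "_meta":
            continue
        # A group is either a plain list of hosts or a dict with a "hosts" list
        groups[name] = value if isinstance(value, list) else value.get("hosts", [])
    return groups


groups = groups_from_inventory(sample)
print(groups["ec2"])
```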

Now we will use ansible to check connectivity to the hosts in the ec2 group:

[ansible@controller ~]$ ansible -i ec2.py ec2 -m ping

[WARNING]: Invalid characters were found in group names but not replaced, use -vvvv to see details

18.216.206.252 | UNREACHABLE! => {

"changed": false,

"msg": "Failed to connect to the host via ssh: Warning: Permanently added '18.216.206.252' (ECDSA) to the list of known hosts.\r\nansible@18.216.206.252: Permission denied (publickey,gssapi-keyex,gssapi-with-mic,password).",

"unreachable": true

}

3.137.172.55 | SUCCESS => {

"ansible_facts": {

"discovered_interpreter_python": "/usr/libexec/platform-python"

},

"changed": false,

"ping": "pong"

}

3.17.80.41 | SUCCESS => {

"ansible_facts": {

"discovered_interpreter_python": "/usr/libexec/platform-python"

},

"changed": false,

"ping": "pong"

}

The ping succeeded for 2 servers but failed for one of them. This is expected, as 18.216.206.252 is the controller itself and I have not copied the public key to the localhost on the controller.

Let us copy the public key to the localhost and re-verify the output

[ansible@controller ~]$ ssh-copy-id controller

Let's re-run the ping command for ec2 group:

[ansible@controller ~]$ ansible -i ec2.py ec2 -m ping

[WARNING]: Invalid characters were found in group names but not replaced, use -vvvv to see details

3.137.172.55 | SUCCESS => {

"ansible_facts": {

"discovered_interpreter_python": "/usr/libexec/platform-python"

},

"changed": false,

"ping": "pong"

}

3.17.80.41 | SUCCESS => {

"ansible_facts": {

"discovered_interpreter_python": "/usr/libexec/platform-python"

},

"changed": false,

"ping": "pong"

}

18.216.206.252 | SUCCESS => {

"ansible_facts": {

"discovered_interpreter_python": "/usr/libexec/platform-python"

},

"changed": false,

"ping": "pong"

}

This time we get a "pong" response from all the servers in the ec2 group.

Creating new instance to verify dynamic inventory script

Since we have a dynamic inventory script, it will fetch any new instance that we create on AWS. However, ansible will fail to connect to a new instance if the SSH keys are not deployed on it, so we have a dependency.

One way to avoid this dependency is to always create new instances from an image of one of the existing managed nodes. The new instance then comes up with all the configuration that is already part of your managed node, including the SSH public key, the ansible user and the Python tooling.

To create an image of any of the managed nodes, select the instance (we will select server1) and then click on Actions → Image → Create Image

Give an image name, for this tutorial I have given "AnsibleManagedNodes" and click on "Create Image".

This process will take some time. You can check the status by selecting "Images" → "AMIs" (Amazon Machine Images) from the left navigation menu.

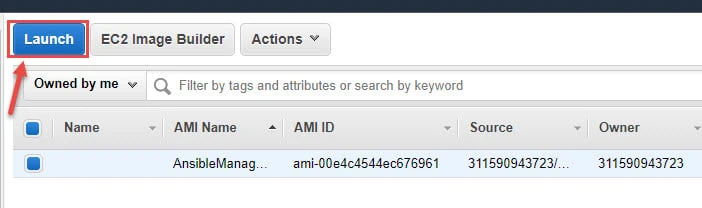

Once the state of AMI is "available", select the respective AMI and click on "Launch" to initiate the process of creating new instance using this image.

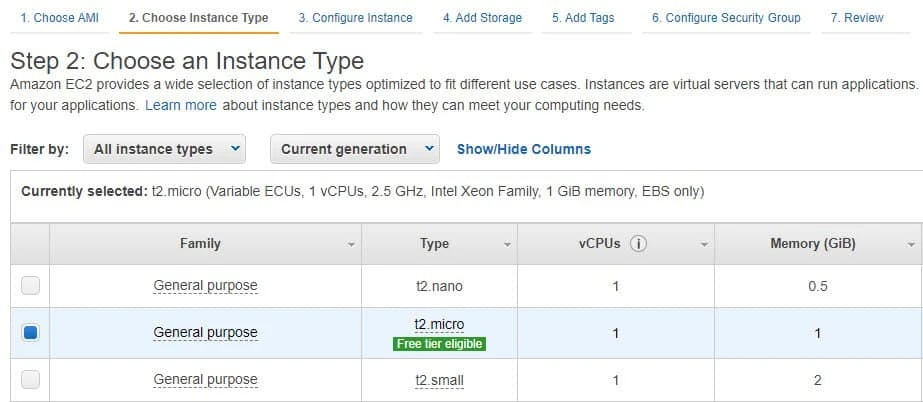

On the next screen, select the instance type based on your requirement, then click on "Next: Configure Instance Details"

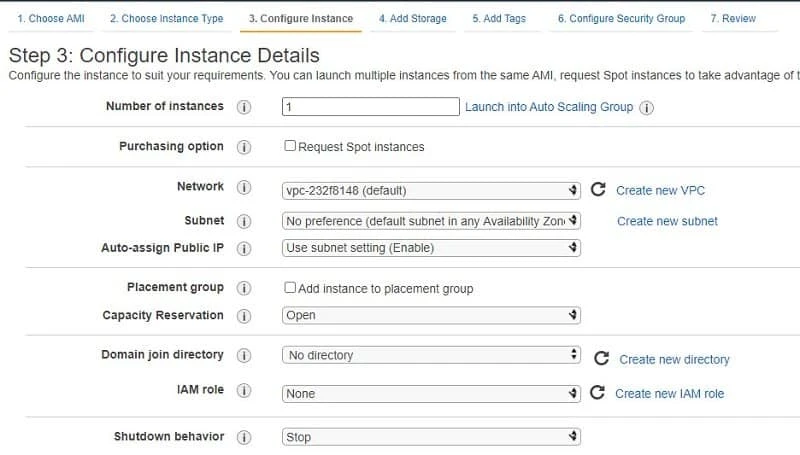

I will leave this section at its default values and click on "Next: Add Storage".

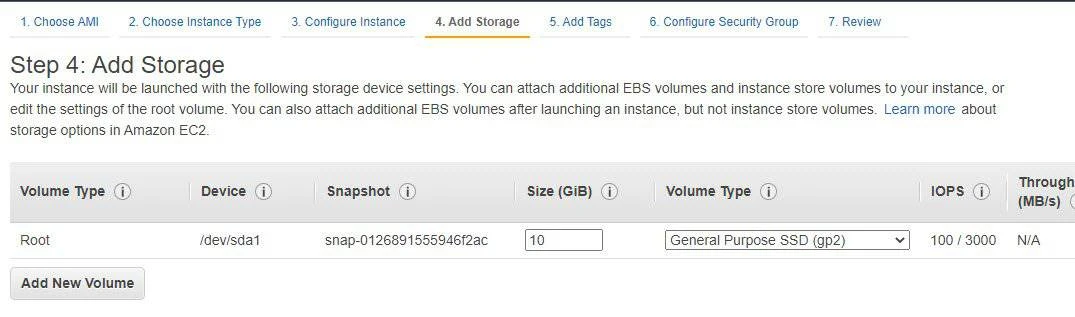

In this section you can choose the storage size. I will leave this at the default as well and click on "Next: Add Tags"

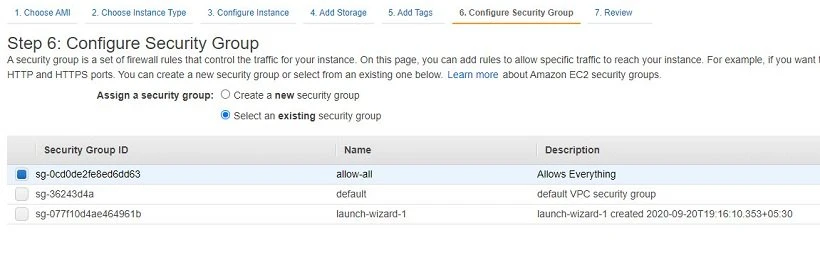

I don't want to add any tag details so click on "Next: Configure Security Group". In this section I will select the security group I created earlier to allow all the traffic and click on "Review and Launch"

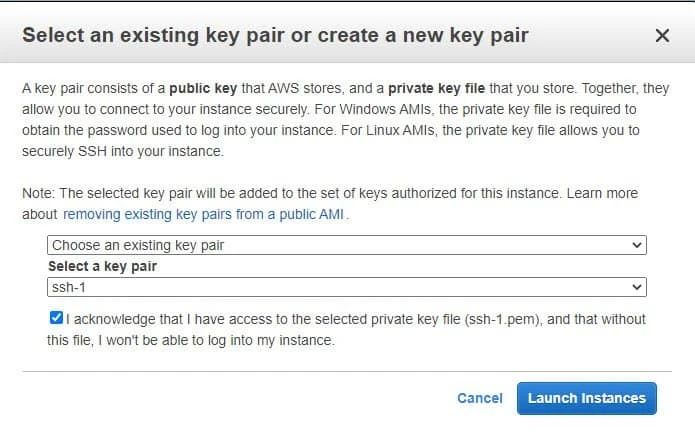

On the next screen you will see the configuration you have chosen for your instance. Click on "Launch" if everything looks correct. Next, select your SSH key pair or create a new one. I will use my existing key pair.

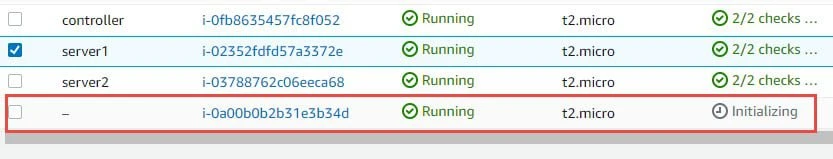

Accept the terms and conditions and click on "Launch Instances". Next, you can click on "View Instances" to check the status. As you can see, the new server is in the "initializing" state and may take some time to come up.
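The console steps above (create an image, wait until it is available, launch an instance from it) can also be scripted. Below is a minimal sketch using a boto3 EC2 client, assuming the controller's IAM role permits these calls (AmazonEC2FullAccess does). clone_managed_node and its parameters are hypothetical; the instance ID, key pair and security group values are placeholders you would supply.

```python
def clone_managed_node(ec2, source_instance_id, image_name, instance_type,
                       key_name, security_group_ids, name_tag):
    """Create an AMI from a managed node and launch one instance from it.

    `ec2` is expected to be a boto3 EC2 client, for example
    boto3.client("ec2", region_name="us-east-2").
    """
    # Equivalent of Actions -> Image -> Create Image (reboots the source by default)
    image_id = ec2.create_image(InstanceId=source_instance_id, Name=image_name)["ImageId"]

    # Block until the AMI state is "available", like watching the AMIs page
    ec2.get_waiter("image_available").wait(ImageIds=[image_id])

    # Equivalent of Launch: instance type, key pair, security group and a Name tag
    response = ec2.run_instances(
        ImageId=image_id,
        InstanceType=instance_type,
        KeyName=key_name,
        SecurityGroupIds=security_group_ids,
        MinCount=1,
        MaxCount=1,
        TagSpecifications=[{
            "ResourceType": "instance",
            "Tags": [{"Key": "Name", "Value": name_tag}],
        }],
    )
    return response["Instances"][0]["InstanceId"]
```

A call might look like clone_managed_node(boto3.client("ec2", region_name="us-east-2"), "<server1-instance-id>", "AnsibleManagedNodes", "t2.micro", "<key-pair-name>", ["<security-group-id>"], "server3"), where t2.micro is only an example instance type. Note that create_image reboots the source instance unless NoReboot=True is passed.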

We will name this instance "server3". You can click on the "refresh" button to check the status of the new instance. Once it is in the "running" state, we will connect to our controller and re-execute the ec2.py script to get the list of hosts using the dynamic inventory:

[ansible@controller ~]$ ./ec2.py

Now look for the "ec2" group from the output:

"ec2": [

"3.17.178.77",

"18.216.206.252",

"3.17.80.41",

"3.137.172.55"

],

As you can see, the ec2 group now has 4 servers. 3.17.178.77 is the only address that was not in the earlier list, so it is the IP of the 4th server which we just created. Next, we will ping a server using an ansible ad-hoc command to check if ansible is able to communicate with it:

[ansible@controller ~]$ ansible -i ec2.py 3.137.172.55 -m ping

[WARNING]: Invalid characters were found in group names but not replaced, use -vvvv to see details

3.137.172.55 | SUCCESS => {

"ansible_facts": {

"discovered_interpreter_python": "/usr/libexec/platform-python"

},

"changed": false,

"ping": "pong"

}

I have given the IP of a single server instead of the entire ec2 group to avoid a long output. We have received a "pong" response, so ansible can reach the hosts returned by the dynamic inventory. Note that the command above targets 3.137.172.55, one of the existing servers; to check the new server specifically, run the same command against 3.17.178.77.
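To spot a newly created instance without comparing the lists by eye, here is a minimal Python sketch that returns the hosts present in a later ec2 group listing but absent from an earlier one. The two lists are copied from the outputs above; new_hosts is a hypothetical helper.

```python
# ec2 group before and after launching server3 (copied from the outputs above)
before = ["18.216.206.252", "3.17.80.41", "3.137.172.55"]
after = ["3.17.178.77", "18.216.206.252", "3.17.80.41", "3.137.172.55"]


def new_hosts(before, after):
    """Return hosts present in `after` but not in `before`, keeping `after`'s order."""
    seen = set(before)
    return [host for host in after if host not in seen]


print(new_hosts(before, after))  # ['3.17.178.77']
```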

Create custom dynamic inventory script

We already have a dynamic inventory script from Ansible but what if we have a requirement to create our own script? So, let's create one dynamic inventory script for our environment.

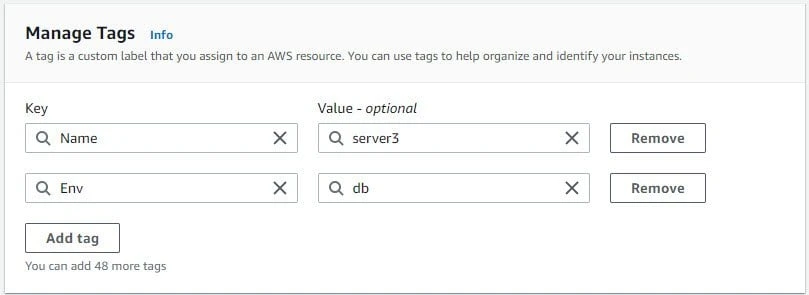

I have added "db" and "app" tags to my managed nodes' instances in AWS so that we can group them accordingly in our inventory. To add or modify a tag, select the instance, click on Actions → Manage Tags and modify the tag value.

Sample screenshot of "Manage tags" from one of my instances.

Similarly you can add Key as Env and Value as "db"

Currently I have added db tag to two nodes and app tag to one node.

If we execute the ec2.py script, we should get this information:

[ansible@controller ~]$ ./ec2.py

<output trimmed>

"tag_Name_app": [

"18.220.88.40"

],

"tag_Name_controller": [

"3.137.186.147"

],

"tag_Name_db": [

"52.15.35.213",

"3.133.110.138"

],

<output trimmed>

I have trimmed the output to show only the tag groups of my EC2 instances. Here I have 3 tag values available: app, db and controller.

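We will save the following script as aws_ec2_custom.py. It collects the public IP address of the instances based on the value of their Env tag.

#!/usr/bin/env python3
"""Custom dynamic inventory: group EC2 instances by the value of their Env tag."""
import sys
import json

try:
    import boto3
except ImportError as e:
    print(e)
    print("Please rectify above exception and then try again")
    sys.exit(1)


def get_hosts(ec2_ob, fv):
    """Return the public IPs of the instances whose Env tag equals fv."""
    f = {"Name": "tag:Env", "Values": [fv]}
    hosts = []
    for each_in in ec2_ob.instances.filter(Filters=[f]):
        # Stopped instances have no public IP (None), so skip them
        if each_in.public_ip_address:
            hosts.append(each_in.public_ip_address)
    return hosts


def main():
    # Ansible runs inventory scripts with --list or with --host <hostname>
    if len(sys.argv) == 3 and sys.argv[1] == "--host":
        # Host variables are already supplied via _meta below
        print(json.dumps({}))
        return
    ec2_ob = boto3.resource("ec2", region_name="us-east-2")
    db_group = get_hosts(ec2_ob, "db")
    app_group = get_hosts(ec2_ob, "app")
    all_groups = {
        "db": db_group,
        "app": app_group,
        # An (empty) _meta section stops Ansible calling --host for every host
        "_meta": {"hostvars": {}},
    }
    print(json.dumps(all_groups))


if __name__ == "__main__":
    main()

Here the script:

- expects the boto3 module to be installed on the controller. You can install it using pip3 install boto3
- searches the instances in the us-east-2 region
- creates two groups, db_group and app_group, which fetch their hosts using the get_hosts function
- inside get_hosts, creates a filter with Name and Values: Name is tag:Env (the tag Key is Env) and Values is either db or app
- for each instance in ec2_ob.instances that matches the filter, appends its public IP address to the hosts list, skipping instances that have no public IP
- stores the db_group and app_group output in all_groups, mapped to the respective tag, together with an empty _meta entry so that Ansible does not have to call the script with --host for every host
- replies to --host <hostname> with an empty JSON object
- uses json.dumps to convert the output into JSON format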

Assign executable permission to the script

[ansible@controller custom_scripts]$ chmod u+x aws_ec2_custom.py

Now we will use the script as our inventory and check whether it fetches the public addresses of our tagged instances:

[ansible@controller custom_scripts]$ ansible -i aws_ec2_custom.py all --list-hosts

hosts (3):

52.15.35.213

3.133.110.138

18.220.88.40

So we get the list of app and db servers. We can also print the servers from individual groups:

[ansible@controller custom_scripts]$ ansible -i aws_ec2_custom.py db --list-hosts

hosts (2):

52.15.35.213

3.133.110.138

[ansible@controller custom_scripts]$ ansible -i aws_ec2_custom.py app --list-hosts

hosts (1):

18.220.88.40

We can use an ad-hoc command to ping all the servers using our dynamic inventory script:

[ansible@controller custom_scripts]$ ansible -i aws_ec2_custom.py all -m ping

52.15.35.213 | SUCCESS => {

"ansible_facts": {

"discovered_interpreter_python": "/usr/libexec/platform-python"

},

"changed": false,

"ping": "pong"

}

3.133.110.138 | SUCCESS => {

"ansible_facts": {

"discovered_interpreter_python": "/usr/libexec/platform-python"

},

"changed": false,

"ping": "pong"

}

18.220.88.40 | SUCCESS => {

"ansible_facts": {

"discovered_interpreter_python": "/usr/libexec/platform-python"

},

"changed": false,

"ping": "pong"

}
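The custom script prints each group as a plain list of hosts. Ansible's script inventory format also accepts a dictionary per group with hosts and vars (and children) keys, plus a top-level _meta.hostvars entry for per-host variables. A minimal sketch of building that richer shape; build_inventory is a hypothetical helper and the ansible_user group variable is only an example.

```python
import json


def build_inventory(groups, group_vars=None, hostvars=None):
    """Build --list output using the dict form of each group ("hosts" + "vars")."""
    group_vars = group_vars or {}
    inventory = {
        name: {"hosts": hosts, "vars": group_vars.get(name, {})}
        for name, hosts in groups.items()
    }
    # Supplying _meta.hostvars means Ansible does not call --host per host
    inventory["_meta"] = {"hostvars": hostvars or {}}
    return inventory


inv = build_inventory(
    {"db": ["52.15.35.213", "3.133.110.138"], "app": ["18.220.88.40"]},
    group_vars={"db": {"ansible_user": "ansible"}},  # hypothetical group variable
)
print(json.dumps(inv, indent=2))
```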

Static Inventory

Before we go into detail, let’s look at a basic inventory file:

[ansible@controller ~]$ cat /etc/ansible/hosts

server1

server2

server3

localhost

- In this example I have defined 3 managed hosts and localhost, which means the controller node will also act as a client node.
- If you want to run your Ansible tasks against all of these hosts, you can pass all as the host pattern to the ansible command (or in the hosts line of a playbook run with ansible-playbook); this makes Ansible run its tasks against all the hosts listed in the inventory file.
- One drawback of this type of flat inventory file is that there is no convenient way to target a subset of the hosts: if you want to run Ansible against only two of the hosts, you have to name them individually, because there is no group to refer to.

Provide hosts as an input argument

If you do not wish to execute ansible on all the hosts from the inventory file, you can manually provide the hostname or IP address as the host pattern to the ansible command (or with --limit for ansible-playbook):

[ansible@controller ~]$ ansible server1:server2 -m ping

Here the ansible command is executed only on server1 and server2. This is one way to target a subset of hosts, but if you have hundreds of hosts it is not practical to list them all on the command line.

Groups in an inventory file

In the following example we have grouped the inventory file into different sections:

[ansible@controller ~]$ cat /etc/ansible/hosts

[devops]

server1

server2

[db]

server3

server4

[app]

server5

server6

Now, instead of running Ansible against all the hosts, you can run it against a set of hosts by using the group name as the host pattern, either with the ansible command or in the hosts line of a playbook. When Ansible runs its tasks against a group, it will take all the hosts that fall under that group.

To run Ansible against all the hosts in the devops group, run the command shown below:

~]# ansible devops -m ping

If you also want to specify the path of your inventory file, then you can use:

~]# ansible devops -i /home/deepak/ansible/hosts -m ping

Groups of groups

- Grouping is a good way to run Ansible on multiple hosts together.
- Ansible provides a way to further group multiple groups together: you can have multiple groups in the inventory file and combine similar groups into one parent group.
- For example, let's say you have multiple application and database servers running in the east zone, grouped as devops and db.

You can then create a parent group called eastzone using the :children suffix, as shown below.

[devops]

server1

server2

[db]

server3

server4

[app]

server5

server6

[eastzone:children]

devops

db

With this, you can run Ansible on your entire eastzone data centre with a single command instead of running it on each group one by one:

[ansible@controller ~]$ ansible eastzone -m ping
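A minimal Python sketch of how a :children group resolves to hosts: the parent contributes its own hosts (none here) plus the hosts of each child group, followed recursively. resolve_hosts and the dictionary layout are illustrative assumptions, not Ansible's internal API.

```python
def resolve_hosts(group, groups, children, _seen=None):
    """Return all hosts of `group`, following :children links recursively."""
    _seen = set() if _seen is None else _seen
    if group in _seen:  # guard against cycles and repeated visits
        return []
    _seen.add(group)
    hosts = list(groups.get(group, []))
    for child in children.get(group, []):
        for host in resolve_hosts(child, groups, children, _seen):
            if host not in hosts:
                hosts.append(host)
    return hosts


# Mirrors the inventory above
groups = {
    "devops": ["server1", "server2"],
    "db": ["server3", "server4"],
    "app": ["server5", "server6"],
}
children = {"eastzone": ["devops", "db"]}
print(resolve_hosts("eastzone", groups, children))
# ['server1', 'server2', 'server3', 'server4']
```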

Regular expressions with an inventory file

- Host ranges are very helpful if you have many servers in your inventory.
- Let's say you have a large number of web servers that follow the same naming convention, for example, server001, server002, …, server00N, and so on.
- Listing all these servers separately results in a messy inventory file, which would be difficult to manage with hundreds to thousands of lines.
- To deal with such situations, Ansible allows you to define ranges of hosts inside its inventory file. Note that this is a numeric (or alphabetic) range in square brackets, not a full regular expression.

[devops]

server[1:9]

[db]

server[10:19]

[app]

server[20:29]

[eastzone:children]

devops

db

- server[1:9] expands to server1, server2, server3, ... server9
- server[10:19] expands to server10, server11, server12, ... server19
- server[20:29] expands to server20, server21, server22, ... server29
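A minimal Python sketch of numeric range expansion: it handles one [start:end] range per name, inclusive of both ends, and the leading-zero form (server[01:09] gives server01 to server09). Ansible's own parser also supports alphabetic ranges and step values; expand_range is a hypothetical helper.

```python
import re


def expand_range(pattern):
    """Expand a single numeric range such as server[1:9] or server[01:09]."""
    match = re.fullmatch(r"(.*)\[(\d+):(\d+)\](.*)", pattern)
    if not match:
        return [pattern]  # plain host name, nothing to expand
    prefix, start, end, suffix = match.groups()
    # A start value written with a leading zero (01) pads every number to its width
    width = len(start) if len(start) > 1 and start.startswith("0") else 0
    return [prefix + str(i).zfill(width) + suffix
            for i in range(int(start), int(end) + 1)]


for pattern in ("server[1:9]", "server[10:19]", "server[20:29]"):
    hosts = expand_range(pattern)
    print(pattern, "->", hosts[0], "...", hosts[-1], f"({len(hosts)} hosts)")
```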

Variables in inventory

We can also define variables inside the inventory file. I have added a new server3 instance to my list of managed nodes, where I have not configured passwordless authentication, and created a new user deepak on server3. I will use the user “deepak” on server3 to communicate using ansible from the controller node.

I have created a custom inventory file under the home folder of the ansible user on the controller node:

[ansible@controller ~]$ cat myinventory

[passwordless]

server1

server2

[password]

server3

Here I have divided my inventory into two groups: the passwordless group consists of 2 hosts where I have configured passwordless authentication, while server3 is configured to use a password.

If I run the whoami command on all these hosts:

[ansible@controller ~]$ ansible -i myinventory all -m command -a "whoami"

server3 | UNREACHABLE! => {

"changed": false,

"msg": "Failed to connect to the host via ssh: ansible@server3: Permission denied (publickey,gssapi-keyex,gssapi-with-mic,password).",

"unreachable": true

}

server1 | CHANGED | rc=0 >>

ansible

server2 | CHANGED | rc=0 >>

ansible

The command executed successfully on server1 and server2, but it failed on server3 due to the lack of passwordless authentication. To overcome this, we can define variables inside the inventory file with the username and password which ansible should use to connect to server3.

Let me update the myinventory file with following information:

[passwordless]

server1

server2

[password]

server3 ansible_ssh_user=deepak ansible_ssh_pass=redhat

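Here I have provided the username and password to log in to server3, so ansible should now communicate with server3 using these credentials. ansible_ssh_user and ansible_ssh_pass are older variable names that still work; newer Ansible documentation uses ansible_user and ansible_password.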
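To make the key=value syntax on the server3 line concrete, here is a minimal Python sketch that splits such a host line into the host name and its variables. It keeps every value as a string, whereas Ansible may interpret inline host values as Python literals (numbers, booleans); parse_host_line is a hypothetical helper.

```python
import shlex


def parse_host_line(line):
    """Split an INI inventory host line into (host, {variable: value})."""
    host, *pairs = shlex.split(line)
    # Every token after the host name is expected to be a key=value pair
    hostvars = dict(pair.split("=", 1) for pair in pairs)
    return host, hostvars


print(parse_host_line("server3 ansible_ssh_user=deepak ansible_ssh_pass=redhat"))
# ('server3', {'ansible_ssh_user': 'deepak', 'ansible_ssh_pass': 'redhat'})
```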

Let us execute the same command:

[ansible@controller ~]$ ansible -i myinventory all -m command -a "whoami"

server2 | CHANGED | rc=0 >>

ansible

server1 | CHANGED | rc=0 >>

ansible

server3 | CHANGED | rc=0 >>

deepak

So the command was successful this time, and you can see that for server3 whoami returned “deepak” instead of the ansible user.

We can also define these variables using “vars” inside the inventory:

[passwordless]

server1

server2

[password]

server3

[password:vars]

ansible_ssh_user=deepak

ansible_ssh_pass=redhat

Ansible can still access the servers in the [password] group using the credentials provided.

However, there is an order of precedence in which these values are considered. A variable assigned to the server itself takes precedence over the same variable defined in the group's “vars” section.

For example, here I have defined ansible_ssh_user as ansible next to the server, while in vars I am using deepak as the ssh user:

[passwordless]

server1

server2

[password]

server3 ansible_ssh_user=ansible ansible_ssh_pass=redhat

[password:vars]

ansible_ssh_user=deepak

ansible_ssh_pass=redhat

Let us execute the ansible ad-hoc command:

[ansible@controller ~]$ ansible -i myinventory all -m command -a "whoami"

server1 | CHANGED | rc=0 >>

ansible

server2 | CHANGED | rc=0 >>

ansible

server3 | CHANGED | rc=0 >>

ansible

Here server3 was accessed using the “ansible” user, so the value given next to the server took precedence. We will learn more about Ansible variables later in a different chapter while working with playbooks.
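The precedence rule shown above can be sketched in Python as two dictionary updates: group variables are applied first and host-level variables override them. This is a simplification that ignores Ansible's many other precedence levels (the all group, parent and child groups, playbook and extra vars); effective_vars is a hypothetical helper.

```python
def effective_vars(host, host_vars, group_vars, host_groups):
    """Combine [group:vars] and inline host vars; host-level values win."""
    merged = {}
    for group in host_groups.get(host, []):
        merged.update(group_vars.get(group, {}))
    # Variables written next to the host override [group:vars]
    merged.update(host_vars.get(host, {}))
    return merged


# Mirrors the last myinventory example
host_vars = {"server3": {"ansible_ssh_user": "ansible", "ansible_ssh_pass": "redhat"}}
group_vars = {"password": {"ansible_ssh_user": "deepak", "ansible_ssh_pass": "redhat"}}
host_groups = {"server3": ["password"], "server1": ["passwordless"]}

print(effective_vars("server3", host_vars, group_vars, host_groups)["ansible_ssh_user"])
# ansible
```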

What's Next

Next in our Ansible Tutorial, we will learn how to work with Ansible managed nodes that do not have Python.

![Ansible Tutorial for Beginners [RHCE EX294 Exam]](/ansible-tutorial/ansible_hu_b9bee27e02250c62.webp)