In this article I will share the steps to install multi node OpenStack on VirtualBox using CentOS 7 Linux. Packstack is mostly used for proof of concept (POC) multi node OpenStack deployments, as it does not give access to all the features of OpenStack. Even though we will install multi node OpenStack on VirtualBox, we will only have a two node setup. Both nodes are virtual machines running CentOS 7 on Oracle VirtualBox, which is installed on Windows 10. Ideally, individual OpenStack services can also be configured to run on separate nodes rather than on a single node.

My Lab Environment

I have Oracle VirtualBox installed on my Windows 10 laptop, and to install multi node OpenStack on VirtualBox I have created two virtual machines running CentOS 7. Below are the details of my virtual machine configuration.

| Component | Configuration | Details |

|---|---|---|

| HDD1 | 20GB | To install the OS. Recommended to configure LVM (on both nodes) |

| HDD2 | 10GB | To configure Cinder Volume. You must create LVM. (available only on controller node server1.example.com) |

| Memory | 8GB | On both nodes |

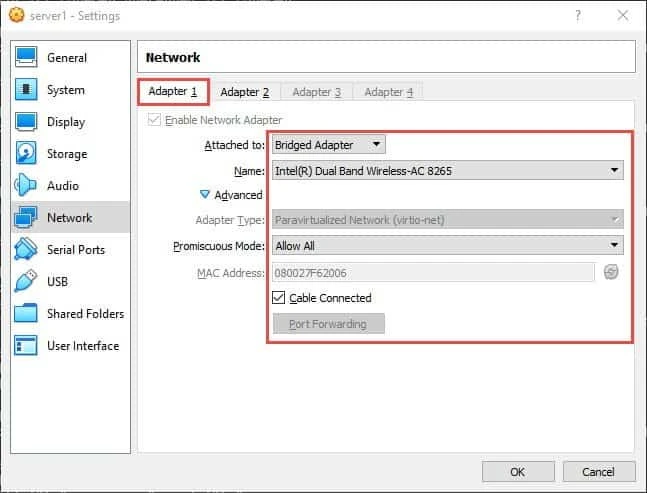

| NIC1 | Bridged Adapter | To connect to internet to download packages (on both nodes) |

| NIC2 | Internal Network | For internal networking between openstack nodes (on both nodes) |

| vCPU | 4 | on both nodes |

| OS | CentOS 7.6 | on both nodes |

| hostname | - | server1.example.com (controller) server2.example.com (compute) |

server1 will act as the controller while server2 will be the compute node in our OpenStack setup.

To configure Cinder storage on our controller node I have already created a separate volume group named cinder-volumes. Packstack will use this volume group to create the Cinder storage.

[root@server1 ~]# vgs

VG #PV #LV #SN Attr VSize VFree

centos 1 2 0 wz--n- <19.00g 0

cinder-volumes 1 0 0 wz--n- <10.00g <10.00g

Here my OS is installed under the centos volume group, while cinder-volumes will be used by Packstack later.
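If you have not created the volume group yet, the steps can be sketched as below. This is only a sketch under assumptions: I assume the second 10GB disk shows up as /dev/sdb (check with lsblk on your own VM), and the DISK, VG_NAME and DRY_RUN variables and the run helper are names made up for this example. By default it only prints the commands instead of running them.

```shell
# Sketch: create the cinder-volumes volume group on the second disk.
# ASSUMPTION: the 10GB disk (HDD2) is /dev/sdb; confirm with lsblk first.
# DRY_RUN=1 (the default here) only prints the commands.
DISK="${DISK:-/dev/sdb}"
VG_NAME="${VG_NAME:-cinder-volumes}"
DRY_RUN="${DRY_RUN:-1}"

# Hypothetical helper: print the command in dry-run mode, execute it otherwise
run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

create_cinder_vg() {
    run pvcreate "$DISK"             # initialise the disk as an LVM physical volume
    run vgcreate "$VG_NAME" "$DISK"  # create the volume group on that disk
    run vgs "$VG_NAME"               # show the new volume group
}

create_cinder_vg
```

To really create the volume group, run the same script as root with DRY_RUN=0 once you are sure DISK points at the empty disk.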

Configure Network to install multi node OpenStack on VirtualBox

Your network configuration is important to properly set up and install multi node OpenStack on VirtualBox. I am using two NIC adapters, as described in the Lab Environment section, and my hosts are running CentOS 7.

For the Internal Network adapter you must have a built-in DHCP server available in Oracle VirtualBox. You can create an Internal Network with a DHCP server using the below command on your Windows 10 laptop

C:\Program Files\Oracle\VirtualBox>VBoxManage dhcpserver add --netname mylab --ip 10.10.10.1 --netmask 255.255.255.0 --lowerip 10.10.10.2 --upperip 10.10.10.64 --enable

For more details check "Internal Network" section in Oracle Virtualbox Chapter

So below is my IP Address configuration.

[root@server1 ~]# ip addr show

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

link/ether 08:00:27:f6:20:06 brd ff:ff:ff:ff:ff:ff

inet 192.168.0.120/24 brd 192.168.0.255 scope global eth0

valid_lft forever preferred_lft forever

inet6 fe80::a00:27ff:fef6:2006/64 scope link

valid_lft forever preferred_lft forever

3: eth1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

link/ether 08:00:27:4b:7a:80 brd ff:ff:ff:ff:ff:ff

inet 10.10.10.2/24 brd 10.10.10.255 scope global dynamic eth1

valid_lft 1086sec preferred_lft 1086sec

inet6 fe80::a00:27ff:fe4b:7a80/64 scope link

valid_lft forever preferred_lft forever

Pre-requisites for OpenStack Deployment with Packstack

Before we start with the steps to install multi node OpenStack on VirtualBox using Packstack, there are certain mandatory pre-requisites which we must comply with.

Deploy OpenStack behind proxy (Optional)

This step is only for those users whose system requires a proxy server to connect to the Internet. I have already written another article with steps to configure a proxy in a Linux environment.

But to be able to install multi node OpenStack on VirtualBox using Packstack behind a proxy, follow the below steps:

Create the file /etc/environment (if it does not already exist) to set the default proxy settings. Add/append the below content to this file:

http_proxy=http://user:password@proxy_server:proxy_port

https_proxy=https://user:password@proxy_server:proxy_port

no_proxy=localhost,127.0.0.1,yourdomain.com,your.ip.add.ress

You also need to include no_proxy, because by default all HTTP and HTTPS traffic is sent through the proxy. You don't want your localhost traffic to go through the proxy, and the same applies when one OpenStack node communicates with another OpenStack node. So make sure the addresses of your OpenStack nodes are listed in no_proxy.

Optionally you can also add the proxy details to /etc/yum.conf (using its proxy option) so that yum on your CentOS 7 server connects through the proxy server.
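As a small illustration of the no_proxy value, the sketch below joins a list of hosts into one comma-separated string without spaces, which is the form most tools expect. The build_no_proxy function is a helper made up for this example, and the two 192.168.0.x addresses are the controller and compute nodes from my lab.

```shell
# Sketch: build a comma-separated no_proxy value with no spaces.
# build_no_proxy is a hypothetical helper, not part of any package.
build_no_proxy() {
    result=""
    for entry in "$@"; do
        if [ -z "$result" ]; then
            result="$entry"
        else
            result="$result,$entry"
        fi
    done
    printf '%s\n' "$result"
}

# The node addresses below are the ones used in this lab
no_proxy="$(build_no_proxy localhost 127.0.0.1 192.168.0.120 192.168.0.121)"
echo "no_proxy=$no_proxy"
```

The printed line can be copied into /etc/environment after adjusting the addresses to your own nodes.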

Update your Linux host

It is always a good idea to update your Linux host before starting the OpenStack installation and configuration, to make sure all the latest available patches and updates are installed. You can update both of your CentOS 7 Linux systems using

# yum update -y

You can also reboot your Linux host once this step is complete, as a new kernel may have been installed.

Update /etc/hosts

It is important that each system is able to resolve the hostnames of both the controller and the compute node. To achieve this you can either configure a DNS server or use the local /etc/hosts file

# echo "192.168.0.120 server1 server1.example.com" >> /etc/hosts

# echo "192.168.0.121 server2 server2.example.com" >> /etc/hosts

Modify the values as per your environment on both the nodes
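Running the echo commands twice leaves duplicate lines in /etc/hosts. A minimal sketch of an idempotent variant is shown below. The add_host_entry function and the HOSTS_FILE variable are names I made up for this example, and by default the sketch writes to a scratch file so it is safe to try.

```shell
# Sketch: append a hosts entry only if the IP is not already listed.
# HOSTS_FILE defaults to a scratch file; set HOSTS_FILE=/etc/hosts (as root)
# to apply it for real.
HOSTS_FILE="${HOSTS_FILE:-$(mktemp)}"

add_host_entry() {
    ip="$1"; shift
    names="$*"
    # Compare the first field exactly, so 192.168.0.12 never matches 192.168.0.120
    if awk -v ip="$ip" '$1 == ip { found = 1 } END { exit !found }' "$HOSTS_FILE"; then
        echo "skip: $ip already present"
    else
        echo "$ip $names" >> "$HOSTS_FILE"
        echo "added: $ip $names"
    fi
}

add_host_entry 192.168.0.120 server1 server1.example.com
add_host_entry 192.168.0.121 server2 server2.example.com
cat "$HOSTS_FILE"
```

Run it on both nodes with your own addresses. A second run only prints the skip messages.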

Disable Consistent Network Device Naming

Red Hat Enterprise Linux provides methods for consistent and predictable network device naming for network interfaces. These features change the name of network interfaces on a system in order to make locating and differentiating the interfaces easier. For OpenStack we must disable this feature, as we need to use the traditional naming convention, i.e. ethX.

To disable consistent network device naming, add net.ifnames=0 biosdevname=0 to the GRUB_CMDLINE_LINUX line in /etc/sysconfig/grub on both the nodes.
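If you prefer not to edit the file by hand, the change can be sketched with sed as below. The add_ifnames_args function is a helper made up for this example, it relies on GNU sed (which CentOS 7 ships), and the demo runs against a scratch copy rather than the real file.

```shell
# Sketch: append the two kernel arguments to GRUB_CMDLINE_LINUX.
# add_ifnames_args is a hypothetical helper; pass /etc/sysconfig/grub as root
# to change the real file.
add_ifnames_args() {
    grub_file="$1"
    # Do nothing if the arguments are already there
    if grep -q 'net.ifnames=0' "$grub_file"; then
        echo "already set in $grub_file"
        return 0
    fi
    # Insert the arguments just before the closing quote of the line
    sed -i 's/^\(GRUB_CMDLINE_LINUX=".*\)"$/\1 net.ifnames=0 biosdevname=0"/' "$grub_file"
}

# Demo on a scratch file with a shortened example line
demo="$(mktemp)"
echo 'GRUB_CMDLINE_LINUX="crashkernel=auto rhgb quiet"' > "$demo"
add_ifnames_args "$demo"
cat "$demo"
```

After running it against the real file, rebuild GRUB2 and reboot for the change to take effect.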

[root@server1 ~]# grep GRUB_CMDLINE_LINUX /etc/sysconfig/grub

GRUB_CMDLINE_LINUX="crashkernel=auto rd.lvm.lv=centos/root rd.lvm.lv=centos/swap rhgb quiet net.ifnames=0 biosdevname=0"

Next rebuild GRUB2

[root@server1 ~]# grub2-mkconfig -o /boot/grub2/grub.cfg

Next reboot your linux system to activate the changes.

Disable NetworkManager

The current setup of OpenStack is not compatible with NetworkManager so we must disable NetworkManager on both the nodes. Below command will stop and disable NetworkManager service

[root@server1 ~]# systemctl disable NetworkManager --now

[root@server2 ~]# systemctl disable NetworkManager --now

Set up RDO repository to install Packstack utility

RDO (RPM Distribution of OpenStack) is a community of people using and deploying OpenStack on CentOS, Fedora, and Red Hat Enterprise Linux. The Packstack tool is not available in the default CentOS repositories, so you must install the RDO repository on both of your CentOS 7 Linux hosts

[root@server1 ~]# yum install -y https://rdoproject.org/repos/rdo-release.rpm

Next you can verify the list of available repositories

[root@server1 ~]# yum repolist

Loaded plugins: fastestmirror

Loading mirror speeds from cached hostfile

* base: mirrors.piconets.webwerks.in

* extras: mirrors.piconets.webwerks.in

* openstack-stein: mirrors.piconets.webwerks.in

* rdo-qemu-ev: mirrors.piconets.webwerks.in

* updates: mirrors.piconets.webwerks.in

repo id repo name status

!base/7/x86_64 CentOS-7 - Base 10,097

!extras/7/x86_64 CentOS-7 - Extras 305

!openstack-stein/x86_64 OpenStack Stein Repository 2,198

!rdo-qemu-ev/x86_64 RDO CentOS-7 - QEMU EV 83

!updates/7/x86_64 CentOS-7 - Updates 711

repolist: 13,394

Sync with NTP Servers

It is important that all the nodes of your multi node OpenStack deployment are synced with an NTP server. Packstack will also configure NTP servers when we install multi node OpenStack on VirtualBox, but it is a good idea to make sure the controller and compute nodes are already in sync before we start.

[root@server2 ~]# ntpdate -u pool.ntp.org

10 Nov 21:14:40 ntpdate[9857]: step time server 157.119.108.165 offset 278398.807924 sec

[root@server1 ~]# ntpdate -u pool.ntp.org

10 Nov 21:14:24 ntpdate[1978]: step time server 5.103.139.163 offset 2.537080 sec

Install Packstack

Packstack is a utility that uses Puppet modules to automatically deploy various parts of OpenStack on multiple pre-installed servers over SSH. You can use yum to install Packstack

[root@server1 ~]# yum -y install openstack-packstack

This should install the Packstack utility. Next you can use packstack --help to see the list of options which Packstack supports to install multi node OpenStack on VirtualBox.

Generate Answer File

To install multi node OpenStack on VirtualBox using Packstack we have to configure multiple values. To make life easier, Packstack has the concept of an answer file, which contains all the variables required to configure and install multi node OpenStack on VirtualBox.

To generate the answer file template

[root@server1 ~]# packstack --gen-answer-file ~/answers.txt

Here I am creating my answer file under /root/ with the name answers.txt. This answer file contains default values to install multi node OpenStack on VirtualBox, and Packstack fills some of the values with details captured from the CentOS 7 controller node where the command is run.

Modify answer file to configure OpenStack

Now the generated answer file contains default values for all the variables. You must modify them as per your requirement.

To install multi node OpenStack on VirtualBox using Packstack in my environment, I have modified below values

CONFIG_DEFAULT_PASSWORD=password

CONFIG_SWIFT_INSTALL=y

CONFIG_HEAT_INSTALL=y

CONFIG_NTP_SERVERS=pool.ntp.org

CONFIG_KEYSTONE_ADMIN_PW=password

CONFIG_CINDER_VOLUMES_SIZE=9G

CONFIG_CINDER_VOLUME_NAME=cinder-volumes

CONFIG_CINDER_VOLUMES_CREATE=y

CONFIG_NEUTRON_L2_AGENT=openvswitch

CONFIG_NEUTRON_OVS_BRIDGE_MAPPINGS=physnet1:br-eth1

CONFIG_NEUTRON_OVS_BRIDGE_IFACES=br-eth1:eth1

CONFIG_NEUTRON_OVS_TUNNEL_IF=eth1

CONFIG_HORIZON_SSL=y

CONFIG_HEAT_CFN_INSTALL=y

CONFIG_NEUTRON_ML2_TENANT_NETWORK_TYPES=vxlan

CONFIG_NEUTRON_ML2_TYPE_DRIVERS=vxlan

CONFIG_NEUTRON_ML2_MECHANISM_DRIVERS=openvswitch

CONFIG_NEUTRON_METERING_AGENT_INSTALL=n

CONFIG_PROVISION_DEMO=n

- For a basic Proof of Concept on OpenStack these modifications should be sufficient. I have used a simple "password" for OpenStack and Keystone, you can choose to use a different password.

- Since I have created the Cinder volume group of 10GB during the time of OS installation, I have provided the cinder-volumes size as 9GB.

- My CentOS 7 system is connected to the internet, hence I am using pool.ntp.org to configure the NTP server.

- As you see I have one controller (192.168.0.120) and one compute (192.168.0.121) node

CONFIG_CONTROLLER_HOST=192.168.0.120

CONFIG_COMPUTE_HOSTS=192.168.0.121

CONFIG_NETWORK_HOSTS=192.168.0.120

CONFIG_STORAGE_HOST=192.168.0.120

CONFIG_SAHARA_HOST=192.168.0.120

CONFIG_AMQP_HOST=192.168.0.120

CONFIG_MARIADB_HOST=192.168.0.120

CONFIG_KEYSTONE_LDAP_URL=ldap://192.168.0.120

CONFIG_REDIS_HOST=192.168.0.120

- Here I am using "openvswitch" as the Neutron backend, which is why I am using the OVS variables to define my networking interfaces

CONFIG_NEUTRON_OVS_BRIDGE_MAPPINGS

CONFIG_NEUTRON_OVS_BRIDGE_IFACES

CONFIG_NEUTRON_OVS_TUNNEL_IF

- But if you plan to use OVN as the Neutron backend then you must use below variables instead of OVS

CONFIG_NEUTRON_OVN_BRIDGE_MAPPINGS

CONFIG_NEUTRON_OVN_BRIDGE_IFACES

CONFIG_NEUTRON_OVN_TUNNEL_IF
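Editing this many keys by hand is error-prone, so the changes can also be scripted. Below is a minimal sketch with a set_answer helper that I made up for this example (it is not part of Packstack). The demo works on a scratch file, and the 10.0.2.15 values in it are placeholder defaults, not values from my real answer file.

```shell
# Sketch: set KEY=VALUE in an answer file, replacing the existing line.
# set_answer is a hypothetical helper; values containing '|' or '&' would
# need escaping before being passed to sed.
set_answer() {
    file="$1"; key="$2"; value="$3"
    if grep -q "^${key}=" "$file"; then
        # '|' is the sed delimiter so values such as ldap://... work unchanged
        sed -i "s|^${key}=.*|${key}=${value}|" "$file"
    else
        # Key not present yet: append it
        echo "${key}=${value}" >> "$file"
    fi
}

# Demo on a scratch file (placeholder starting values)
answers="$(mktemp)"
printf '%s\n' 'CONFIG_CONTROLLER_HOST=10.0.2.15' 'CONFIG_COMPUTE_HOSTS=10.0.2.15' > "$answers"
set_answer "$answers" CONFIG_CONTROLLER_HOST 192.168.0.120
set_answer "$answers" CONFIG_COMPUTE_HOSTS 192.168.0.121
set_answer "$answers" CONFIG_PROVISION_DEMO n
cat "$answers"
```

Against the real file you would call it as set_answer /root/answers.txt KEY VALUE for each key listed above.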

Install multi node OpenStack on VirtualBox using Packstack

Our answer file is now ready for multi node OpenStack deployment. Next execute Packstack on the CentOS 7 controller node (server1) to deploy OpenStack

[root@server1 ~]# packstack --answer-file /root/answers.txt

Welcome to the Packstack setup utility

The installation log file is available at: /var/tmp/packstack/20191110-214111-UMIIGA/openstack-setup.log

Installing:

Clean Up [ DONE ]

Discovering ip protocol version [ DONE ]

root@192.168.0.120's password:

root@192.168.0.121's password:

Setting up ssh keys [ DONE ]

Preparing servers [ DONE ]

Pre installing Puppet and discovering hosts' details [ DONE ]

Preparing pre-install entries [ DONE ]

Installing time synchronization via NTP [ DONE ]

Setting up CACERT [ DONE ]

Preparing AMQP entries [ DONE ]

Preparing MariaDB entries [ DONE ]

Fixing Keystone LDAP config parameters to be undef if empty[ DONE ]

Preparing Keystone entries [ DONE ]

Preparing Glance entries [ DONE ]

Checking if the Cinder server has a cinder-volumes vg[ DONE ]

Preparing Cinder entries [ DONE ]

Preparing Nova API entries [ DONE ]

Creating ssh keys for Nova migration [ DONE ]

Gathering ssh host keys for Nova migration [ DONE ]

Preparing Nova Compute entries [ DONE ]

Preparing Nova Scheduler entries [ DONE ]

Preparing Nova VNC Proxy entries [ DONE ]

Preparing OpenStack Network-related Nova entries [ DONE ]

Preparing Nova Common entries [ DONE ]

Preparing Neutron LBaaS Agent entries [ DONE ]

Preparing Neutron API entries [ DONE ]

Preparing Neutron L3 entries [ DONE ]

Preparing Neutron L2 Agent entries [ DONE ]

Preparing Neutron DHCP Agent entries [ DONE ]

Preparing Neutron Metering Agent entries [ DONE ]

Checking if NetworkManager is enabled and running [ DONE ]

Preparing OpenStack Client entries [ DONE ]

Preparing Horizon entries [ DONE ]

Preparing Heat entries [ DONE ]

Preparing Heat CloudFormation API entries [ DONE ]

Preparing Gnocchi entries [ DONE ]

Preparing Redis entries [ DONE ]

Preparing Ceilometer entries [ DONE ]

Preparing Aodh entries [ DONE ]

Preparing Puppet manifests [ DONE ]

Copying Puppet modules and manifests [ DONE ]

Applying 192.168.0.120_controller.pp

192.168.0.120_controller.pp: [ DONE ]

Applying 192.168.0.120_network.pp

192.168.0.120_network.pp: [ DONE ]

Applying 192.168.0.121_compute.pp

192.168.0.121_compute.pp: [ DONE ]

Applying Puppet manifests [ DONE ]

Finalizing [ DONE ]

**** Installation completed successfully ******

Additional information:

* File /root/keystonerc_admin has been created on OpenStack client host 192.168.0.120. To use the command line tools you need to source the file.

* NOTE : A certificate was generated to be used for ssl, You should change the ssl certificate configured in /etc/httpd/conf.d/ssl.conf on 192.168.0.120 to use a CA signed cert.

* To access the OpenStack Dashboard browse to https://192.168.0.120/dashboard .

Please, find your login credentials stored in the keystonerc_admin in your home directory.

* The installation log file is available at: /var/tmp/packstack/20191110-214111-UMIIGA/openstack-setup.log

* The generated manifests are available at: /var/tmp/packstack/20191110-214111-UMIIGA/manifests

Now assuming you don't face any issues during the multi node OpenStack deployment on Oracle VirtualBox, when the Packstack utility exits you will get the OpenStack Dashboard (Horizon) URL and the keystonerc file location.

Errors observed during multi node OpenStack deployment

I also faced a few issues while trying to install multi node OpenStack on VirtualBox using the Packstack utility.

Error 1: Could not evaluate: Cannot allocate memory - fork(2)

During my initial run I got the below error while trying to install multi node OpenStack on VirtualBox using Packstack

ERROR : Error appeared during Puppet run: 192.168.0.120_controller.pp

Error: /Stage[main]/Neutron::Plugins::Ml2::Ovn/Package[python-networking-ovn]: Could not evaluate: Cannot allocate memory - fork(2)

This is observed if the system is not able to create any new processes, or if there is no free process ID left to assign to a new process.

Increase the value of kernel.pid_max to 65534:

[root@server1 ~]# echo kernel.pid_max = 65534 >> /etc/sysctl.conf

[root@server1 ~]# sysctl -p

Verify the new value

[root@server1 ~]# sysctl -a | grep kernel.pid_max

kernel.pid_max = 65534

Set the value for max number of open files and max number of processes to unlimited in the /etc/security/limits.conf file:

[root@server1 ~]# cat /etc/security/limits.conf

root soft nproc unlimited

root soft nofile unlimited

Error 2: Failed to apply catalog: Cannot allocate memory - fork(2)

The other error was similar to the above one. Here the problem was that my CentOS 7 VM on the Windows 10 laptop was running with too little memory.

ERROR : Error appeared during Puppet run: 192.168.0.120_controller.pp

Error: Failed to apply catalog: Cannot allocate memory - fork(2)

Initially I had given only 4GB to this CentOS 7 VM, due to which the execution was failing. Later I increased the memory for my CentOS 7 VM to 8GB, although as per Red Hat the recommendation is to have at least 16GB of memory to set up OpenStack in a virtual environment.
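A quick pre-check of the memory on each node can save a failed Packstack run. The sketch below is only an illustration: mem_total_gb and check_memory are helper names made up for this example, and the default threshold of 8 simply mirrors the 8GB that worked in my lab, not an official limit.

```shell
# Sketch: warn when a node has less RAM than a chosen threshold.
# Both functions are hypothetical helpers for this article.
mem_total_gb() {
    meminfo="${1:-/proc/meminfo}"
    # MemTotal is reported in kB; convert to whole GiB, rounding up,
    # because the kernel reserves part of the configured RAM
    awk '/^MemTotal:/ { printf "%d\n", ($2 + 1048575) / 1048576 }' "$meminfo"
}

check_memory() {
    min_gb="${2:-8}"
    total="$(mem_total_gb "$1")"
    if [ "$total" -lt "$min_gb" ]; then
        echo "WARN: ${total}GB RAM, below ${min_gb}GB"
        return 1
    fi
    echo "OK: ${total}GB RAM"
}

# Check the machine this runs on, if /proc/meminfo is readable
if [ -r /proc/meminfo ]; then
    check_memory || true
fi
```

Both arguments are optional: the first is an alternative meminfo file and the second is the threshold in GB.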

Error 3: Couldn't detect ipaddress of interface eth1 on node 192.168.0.120

Here I got this error at the very initial stage of multi node OpenStack deployment with Packstack on my CentOS 7 controller node.

Couldn't detect ipaddress of interface eth1 on node 192.168.0.120

The cause was that my eth1 interface did not have an IP address. The internal network on my Windows 10 laptop was not set up properly, so eth1 never received an IP address over DHCP. It is important that eth1 has a valid IP address before we start Packstack to install multi node OpenStack on VirtualBox.
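This condition is easy to check before starting Packstack. The sketch below parses the one-line-per-address output of ip -o -4 addr show. The iface_ipv4 and require_ipv4 functions are helpers made up for this example, and the sample line in the demo is modelled on the eth1 output shown earlier.

```shell
# Sketch: find the IPv4 address of an interface in `ip -o -4 addr show` output.
# Both functions are hypothetical helpers for this article.
iface_ipv4() {
    # With -o each address is one line: "3: eth1    inet 10.10.10.2/24 brd ..."
    awk -v dev="$1" '$2 == dev && $3 == "inet" { print $4; exit }'
}

require_ipv4() {
    addr="$(ip -o -4 addr show | iface_ipv4 "$1")"
    if [ -z "$addr" ]; then
        echo "ERROR: $1 has no IPv4 address" >&2
        return 1
    fi
    echo "$1 has $addr"
}

# Demo on a sample line instead of the live system
sample='3: eth1    inet 10.10.10.2/24 brd 10.10.10.255 scope global dynamic eth1'
printf '%s\n' "$sample" | iface_ipv4 eth1
```

On the controller you could then run require_ipv4 eth1 and only start Packstack when it succeeds.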

Verify OpenStack Installation

Next there are a couple of checks which must be performed to make sure we were able to install multi node OpenStack on VirtualBox using Packstack successfully.

Verify status of OpenStack Services on controller (server1)

Now to make sure we were able to install multi node OpenStack on VirtualBox successfully, we should check the status of the OpenStack services. OpenStack is not a single service but a combination of multiple services, so you can either check each service individually or use openstack-status. By default this tool is not available on the node. You can get it by installing the openstack-utils rpm

[root@server1 ~]# yum -y install openstack-utils

Next check the OpenStack service status on Controller node

[root@server1 ~]# openstack-status

== Nova services ==

openstack-nova-api: active

openstack-nova-compute: inactive (disabled on boot)

openstack-nova-network: inactive (disabled on boot)

openstack-nova-scheduler: active

openstack-nova-conductor: active

openstack-nova-console: inactive (disabled on boot)

openstack-nova-consoleauth: active

openstack-nova-xvpvncproxy: inactive (disabled on boot)

== Glance services ==

openstack-glance-api: active

openstack-glance-registry: active

== Keystone service ==

openstack-keystone: inactive (disabled on boot)

== Horizon service ==

openstack-dashboard: 301

== neutron services ==

neutron-server: active

neutron-dhcp-agent: active

neutron-l3-agent: active

neutron-metadata-agent: active

neutron-openvswitch-agent: active

== Cinder services ==

openstack-cinder-api: active

openstack-cinder-scheduler: active

openstack-cinder-volume: active

openstack-cinder-backup: active

== Ceilometer services ==

openstack-ceilometer-api: inactive (disabled on boot)

openstack-ceilometer-central: active

openstack-ceilometer-compute: inactive (disabled on boot)

openstack-ceilometer-collector: inactive (disabled on boot)

openstack-ceilometer-notification: active

== Heat services ==

openstack-heat-api: active

openstack-heat-api-cfn: active

openstack-heat-api-cloudwatch: inactive (disabled on boot)

openstack-heat-engine: active

== Support services ==

openvswitch: active

dbus: active

target: active

rabbitmq-server: active

memcached: active

== Keystone users ==

Warning keystonerc not sourced

Verify status of OpenStack Services on compute (server2)

Similarly on the compute node verify the status of nova compute. Currently we see most of the services are inactive but that is fine as we will work on them in our upcoming articles.

[root@server2 ~]# openstack-status

== Nova services ==

openstack-nova-api: inactive (disabled on boot)

openstack-nova-compute: active

openstack-nova-network: inactive (disabled on boot)

openstack-nova-scheduler: inactive (disabled on boot)

== neutron services ==

neutron-server: inactive (disabled on boot)

neutron-dhcp-agent: inactive (disabled on boot)

neutron-l3-agent: inactive (disabled on boot)

neutron-metadata-agent: inactive (disabled on boot)

neutron-openvswitch-agent: active

== Ceilometer services ==

openstack-ceilometer-api: inactive (disabled on boot)

openstack-ceilometer-central: inactive (disabled on boot)

openstack-ceilometer-compute: inactive (disabled on boot)

openstack-ceilometer-collector: inactive (disabled on boot)

== Support services ==

openvswitch: active

dbus: active

Warning novarc not sourced
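Since the output is long, it can help to filter it down to the services that are actually running. A minimal sketch is shown below. The active_services function is a helper made up for this example, and it assumes the "name: status" line format seen in the output above.

```shell
# Sketch: list only the services that openstack-status reports as "active".
# active_services is a hypothetical helper that reads the output on stdin.
active_services() {
    # Skip "== ... ==" section headers; split each line on the colon
    awk -F': *' '!/^==/ && $2 == "active" { print $1 }'
}

# Demo on a few lines copied from the compute node output
printf '%s\n' \
    '== Nova services ==' \
    'openstack-nova-api: inactive (disabled on boot)' \
    'openstack-nova-compute: active' \
    'neutron-openvswitch-agent: active' | active_services
```

On a node you would use it as openstack-status | active_services.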

Verify cinder-volumes volumegroup

We had also provided the details of our cinder-volumes volume group in the answer file. You can see that it is now used by OpenStack for Cinder storage

[root@server1 ~]# vgs

VG #PV #LV #SN Attr VSize VFree

centos 1 2 0 wz--n- <19.00g 0

cinder-volumes 1 1 0 wz--n- <10.00g 484.00m

We now have a new logical volume (cinder-volumes-pool) which was created by packstack utility.

[root@server1 ~]# lvs

LV VG Attr LSize Pool Origin Data% Meta% Move Log Cpy%Sync Convert

root centos -wi-ao---- <18.00g

swap centos -wi-ao---- 1.00g

cinder-volumes-pool cinder-volumes twi-a-tz-- 9.50g 0.00 10.58

Verify Networking on server1 (controller)

Now we can see that some additional network configuration has been added on our Linux box. It matches what we had added to our Packstack answer file.

[root@server1 ~]# ip addr show

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

link/ether 08:00:27:f6:20:06 brd ff:ff:ff:ff:ff:ff

inet 192.168.0.120/24 brd 192.168.0.255 scope global eth0

valid_lft forever preferred_lft forever

inet6 fe80::a00:27ff:fef6:2006/64 scope link

valid_lft forever preferred_lft forever

3: eth1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast master ovs-system state UP group default qlen 1000

link/ether 08:00:27:4b:7a:80 brd ff:ff:ff:ff:ff:ff

inet6 fe80::a00:27ff:fe4b:7a80/64 scope link

valid_lft forever preferred_lft forever

6: ovs-system: <BROADCAST,MULTICAST> mtu 1500 qdisc noop state DOWN group default qlen 1000

link/ether c2:aa:61:37:ec:1e brd ff:ff:ff:ff:ff:ff

7: br-eth1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UNKNOWN group default qlen 1000

link/ether 08:00:27:4b:7a:80 brd ff:ff:ff:ff:ff:ff

inet 10.10.10.2/24 brd 10.10.10.255 scope global br-eth1

valid_lft forever preferred_lft forever

inet6 fe80::a8de:6fff:fead:b145/64 scope link

valid_lft forever preferred_lft forever

8: br-ex: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UNKNOWN group default qlen 1000

link/ether 36:b4:50:57:84:4e brd ff:ff:ff:ff:ff:ff

inet6 fe80::34b4:50ff:fe57:844e/64 scope link

valid_lft forever preferred_lft forever

9: br-int: <BROADCAST,MULTICAST> mtu 1500 qdisc noop state DOWN group default qlen 1000

link/ether ae:8c:6f:e8:19:4a brd ff:ff:ff:ff:ff:ff

10: br-tun: <BROADCAST,MULTICAST> mtu 1500 qdisc noop state DOWN group default qlen 1000

link/ether 4a:78:bc:22:b7:45 brd ff:ff:ff:ff:ff:ff

Here we don't see any IP address assigned to the br-ex interface, which we will fix in the next steps.

Verify Networking on server2 (compute)

Similarly check the network configuration on server2. Here we don't see a br-eth1 interface, which we will have to create to set up a bridge network between the controller and compute nodes.

[root@server2 ~]# ip addr show

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

link/ether 08:00:27:49:67:6f brd ff:ff:ff:ff:ff:ff

inet 192.168.0.121/24 brd 192.168.0.255 scope global noprefixroute eth0

valid_lft forever preferred_lft forever

inet6 fe80::a00:27ff:fe49:676f/64 scope link

valid_lft forever preferred_lft forever

3: eth1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

link/ether 08:00:27:51:81:1c brd ff:ff:ff:ff:ff:ff

inet 10.10.10.3/24 brd 10.10.10.255 scope global noprefixroute eth1

valid_lft forever preferred_lft forever

inet6 fe80::a00:27ff:fe51:811c/64 scope link

valid_lft forever preferred_lft forever

4: ovs-system: <BROADCAST,MULTICAST> mtu 1500 qdisc noop state DOWN group default qlen 1000

link/ether 02:55:bb:65:92:fd brd ff:ff:ff:ff:ff:ff

5: br-int: <BROADCAST,MULTICAST> mtu 1500 qdisc noop state DOWN group default qlen 1000

link/ether 2a:9d:8e:e6:37:41 brd ff:ff:ff:ff:ff:ff

6: br-tun: <BROADCAST,MULTICAST> mtu 1500 qdisc noop state DOWN group default qlen 1000

link/ether 8a:8a:57:05:f6:40 brd ff:ff:ff:ff:ff:ff

7: vxlan_sys_4789: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 65000 qdisc noqueue master ovs-system state UNKNOWN group default qlen 1000

link/ether ca:3a:76:33:4b:a0 brd ff:ff:ff:ff:ff:ff

inet6 fe80::c83a:76ff:fe33:4ba0/64 scope link

valid_lft forever preferred_lft forever

Here also we have some more configuration to be done for the network bridge.

Configure OpenStack Networking on server1 (controller)

My eth0 interface, which is my primary interface connected to the external network, is mapped to the ifcfg-eth0 configuration file under /etc/sysconfig/network-scripts. So I need to modify this interface file and also create a configuration file for the external bridge network interface.

[root@server1 ~]# cd /etc/sysconfig/network-scripts/

Copy the content of ifcfg-eth0 to create a new file ifcfg-br-ex under /etc/sysconfig/network-scripts/

[root@server1 network-scripts]# cp ifcfg-eth0 ifcfg-br-ex

Next take a backup of your ifcfg-eth0 configuration file, so that you have something to roll back to in case you make a mistake.

[root@server1 network-scripts]# cp ifcfg-eth0 /tmp/ifcfg-eth0.bkp

Below is the final content of both configuration files. You can modify it according to your environment.

[root@server1 network-scripts]# cat ifcfg-br-ex

TYPE=OVSBridge

BOOTPROTO=static

IPADDR=192.168.0.120

PREFIX=24

GATEWAY=192.168.0.1

DNS1=8.8.8.8

DEVICE=br-ex

ONBOOT=yes

PEERDNS=yes

USERCTL=yes

DEVICETYPE=ovs

[root@server1 network-scripts]# cat ifcfg-eth0

BOOTPROTO=none

TYPE=OVSPort

OVS_BRIDGE=br-ex

ONBOOT=yes

DEVICETYPE=ovs

DEVICE=eth0

Next reboot the server. If everything is good, you should have the proper network configuration after the reboot.

Post reboot verify your network addresses

[root@server1 ~]# ip addr show

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast master ovs-system state UP group default qlen 1000

link/ether 08:00:27:f6:20:06 brd ff:ff:ff:ff:ff:ff

inet6 fe80::a00:27ff:fef6:2006/64 scope link

valid_lft forever preferred_lft forever

3: eth1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast master ovs-system state UP group default qlen 1000

link/ether 08:00:27:4b:7a:80 brd ff:ff:ff:ff:ff:ff

inet6 fe80::a00:27ff:fe4b:7a80/64 scope link

valid_lft forever preferred_lft forever

4: ovs-system: <BROADCAST,MULTICAST> mtu 1500 qdisc noop state DOWN group default qlen 1000

link/ether 02:06:46:ba:95:bb brd ff:ff:ff:ff:ff:ff

5: vxlan_sys_4789: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 65000 qdisc noqueue master ovs-system state UNKNOWN group default qlen 1000

link/ether ce:56:11:ad:10:87 brd ff:ff:ff:ff:ff:ff

inet6 fe80::cc56:11ff:fead:1087/64 scope link

valid_lft forever preferred_lft forever

6: br-tun: <BROADCAST,MULTICAST> mtu 1500 qdisc noop state DOWN group default qlen 1000

link/ether 4a:78:bc:22:b7:45 brd ff:ff:ff:ff:ff:ff

7: br-int: <BROADCAST,MULTICAST> mtu 1500 qdisc noop state DOWN group default qlen 1000

link/ether ae:8c:6f:e8:19:4a brd ff:ff:ff:ff:ff:ff

8: br-eth1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UNKNOWN group default qlen 1000

link/ether 08:00:27:4b:7a:80 brd ff:ff:ff:ff:ff:ff

inet 10.10.10.2/24 brd 10.10.10.255 scope global br-eth1

valid_lft forever preferred_lft forever

inet6 fe80::c0f1:57ff:fe5c:3346/64 scope link

valid_lft forever preferred_lft forever

9: br-ex: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UNKNOWN group default qlen 1000

link/ether 08:00:27:f6:20:06 brd ff:ff:ff:ff:ff:ff

inet 192.168.0.120/24 brd 192.168.0.255 scope global br-ex

valid_lft forever preferred_lft forever

inet6 fe80::ac01:68ff:fea0:e46/64 scope link

valid_lft forever preferred_lft forever

So now our br-ex and br-eth1 interfaces have the IP addresses that were previously on eth0 and eth1 respectively.

Check the default gateway

[root@server1 network-scripts]# ip route show

default via 192.168.0.1 dev br-ex

10.10.10.0/24 dev br-eth1 proto kernel scope link src 10.10.10.2

169.254.0.0/16 dev eth0 scope link metric 1002

169.254.0.0/16 dev eth1 scope link metric 1003

169.254.0.0/16 dev br-eth1 scope link metric 1016

169.254.0.0/16 dev br-ex scope link metric 1017

192.168.0.0/24 dev br-ex proto kernel scope link src 192.168.0.120
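The same check can be scripted, for example to confirm after a reboot that the default route really moved to br-ex. The sketch below is an illustration only: default_route_dev is a helper name made up for this example, and it reads ip route show output on stdin.

```shell
# Sketch: print the device used by the first default route.
# default_route_dev is a hypothetical helper for this article.
default_route_dev() {
    # Find the word "dev" on the default line and print the word after it
    awk '$1 == "default" { for (i = 2; i < NF; i++) if ($i == "dev") { print $(i + 1); exit } }'
}

# Demo on two lines copied from the output above
printf '%s\n' \
    'default via 192.168.0.1 dev br-ex' \
    '10.10.10.0/24 dev br-eth1 proto kernel scope link src 10.10.10.2' | default_route_dev
```

On the controller you would run ip route show | default_route_dev and expect br-ex.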

Configure OpenStack Networking on server2 (compute)

To install multi node OpenStack on VirtualBox, configure the internal network bridge br-eth1 on server2

[root@server2 network-scripts]# cat ifcfg-eth1

DEVICE=eth1

NAME=eth1

DEVICETYPE=ovs

TYPE=OVSPort

OVS_BRIDGE=br-eth1

ONBOOT=yes

BOOTPROTO=none

[root@server2 network-scripts]# cat ifcfg-br-eth1

DEFROUTE=yes

NAME=eth1

ONBOOT=yes

DEVICE=br-eth1

DEVICETYPE=ovs

OVSBOOTPROTO=none

TYPE=OVSBridge

IPADDR=10.10.10.3

PREFIX=24
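If you have several compute nodes, writing this pair of files by hand gets repetitive. The sketch below generates them from a few arguments. The write_ovs_ifcfg function is a helper made up for this example, the keys and values are copied from my server2 files above, and the demo writes into a scratch directory instead of /etc/sysconfig/network-scripts.

```shell
# Sketch: write the ifcfg pair for an OVS bridge and its physical port.
# write_ovs_ifcfg is a hypothetical helper; keys mirror the server2 files above.
write_ovs_ifcfg() {
    dir="$1"; bridge="$2"; port="$3"; ipaddr="$4"; prefix="$5"

    # Physical interface, attached to the bridge as an OVS port
    cat > "$dir/ifcfg-$port" <<EOF
DEVICE=$port
NAME=$port
DEVICETYPE=ovs
TYPE=OVSPort
OVS_BRIDGE=$bridge
ONBOOT=yes
BOOTPROTO=none
EOF

    # Bridge interface, which carries the static IP address
    cat > "$dir/ifcfg-$bridge" <<EOF
DEFROUTE=yes
NAME=$port
ONBOOT=yes
DEVICE=$bridge
DEVICETYPE=ovs
OVSBOOTPROTO=none
TYPE=OVSBridge
IPADDR=$ipaddr
PREFIX=$prefix
EOF
}

# Demo: generate the server2 files in a scratch directory
out_dir="$(mktemp -d)"
write_ovs_ifcfg "$out_dir" br-eth1 eth1 10.10.10.3 24
cat "$out_dir/ifcfg-br-eth1"
```

Review the generated files before copying them into /etc/sysconfig/network-scripts/ on the node.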

Next reboot the server, post reboot verify the networking on server2

[root@server2 network-scripts]# ip addr show

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

link/ether 08:00:27:49:67:6f brd ff:ff:ff:ff:ff:ff

inet 192.168.0.121/24 brd 192.168.0.255 scope global eth0

valid_lft forever preferred_lft forever

inet6 fe80::a00:27ff:fe49:676f/64 scope link

valid_lft forever preferred_lft forever

3: eth1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast master ovs-system state UP group default qlen 1000

link/ether 08:00:27:51:81:1c brd ff:ff:ff:ff:ff:ff

inet6 fe80::a00:27ff:fe51:811c/64 scope link

valid_lft forever preferred_lft forever

4: ovs-system: <BROADCAST,MULTICAST> mtu 1500 qdisc noop state DOWN group default qlen 1000

link/ether 6e:35:20:83:b4:75 brd ff:ff:ff:ff:ff:ff

5: br-tun: <BROADCAST,MULTICAST> mtu 1500 qdisc noop state DOWN group default qlen 1000

link/ether 8a:8a:57:05:f6:40 brd ff:ff:ff:ff:ff:ff

6: vxlan_sys_4789: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 65000 qdisc noqueue master ovs-system state UNKNOWN group default qlen 1000

link/ether d6:af:1e:ca:b4:d2 brd ff:ff:ff:ff:ff:ff

inet6 fe80::d4af:1eff:feca:b4d2/64 scope link

valid_lft forever preferred_lft forever

7: br-int: <BROADCAST,MULTICAST> mtu 1500 qdisc noop state DOWN group default qlen 1000

link/ether 2a:9d:8e:e6:37:41 brd ff:ff:ff:ff:ff:ff

9: br-eth1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UNKNOWN group default qlen 1000

link/ether 08:00:27:51:81:1c brd ff:ff:ff:ff:ff:ff

inet 10.10.10.3/24 brd 10.10.10.255 scope global br-eth1

valid_lft forever preferred_lft forever

inet6 fe80::8070:56ff:fea5:348/64 scope link

valid_lft forever preferred_lft forever

For POC purposes this setup should be enough to demonstrate the steps to install multi node OpenStack on VirtualBox using Packstack. There are many other topics related to OpenStack which I will cover in the next articles, and I will add the hyperlinks here.

Lastly I hope the steps from the article to install multi node OpenStack on VirtualBox using CentOS 7 Linux were helpful. So, let me know your suggestions and feedback using the comment section.
