In this Kubernetes tutorial we will use Deployments to perform a RollingUpdate of an application running inside Pods. This section covers how to update apps running in a Kubernetes cluster and how Kubernetes helps you move toward a true zero-downtime update process.

Although this can be achieved using only ReplicationControllers or ReplicaSets, Kubernetes also provides a Deployment resource that sits on top of ReplicaSets and enables declarative application updates.

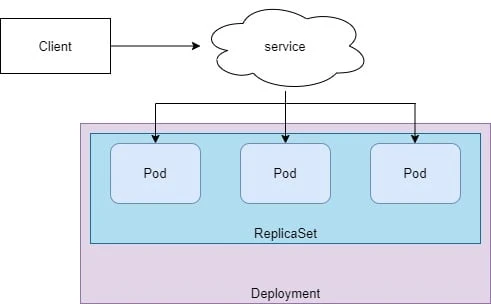

Overview on Kubernetes Deployment

- When running Pods in a datacenter, you may need additional features such as scalability, updates and rollbacks, which are offered by Deployments.

- A Deployment is a higher-level resource meant for deploying applications and updating them declaratively, instead of doing it through a ReplicationController or a ReplicaSet, which are both considered lower-level concepts.

- When you create a Deployment, a ReplicaSet resource is created underneath. ReplicaSets replicate and manage pods as well.

- When using a Deployment, the actual pods are created and managed by the Deployment’s ReplicaSets, not by the Deployment directly

Create Kubernetes Deployment resource

In the Deployment spec, the following properties are managed:

- replicas: specifies how many copies of the Pod should be running

- strategy: specifies how Pods should be replaced during an update

- selector: uses matchLabels to identify which Pods belong to the Deployment by their labels

- template: contains the pod specification and is used by the Deployment to create Pods
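If you want more detail on any of these fields, kubectl explain prints the API documentation for a resource path. The following is a minimal sketch; the helper name explain_spec is my own invention and only wraps the standard kubectl explain subcommand:

```shell
#!/usr/bin/env bash
# Hypothetical helper: show the API documentation for one Deployment spec field.
# Only the function name is invented; kubectl explain is a standard subcommand.
explain_spec() {
  local field=$1   # e.g. replicas, strategy, selector or template
  kubectl explain "deployment.spec.${field}"
}
```

For example, explain_spec strategy runs kubectl explain deployment.spec.strategy, which should describe the update strategy fields used later in this tutorial.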

Since we do not have any deployment yet, we don't have a template to start from. If you are already familiar with the YAML syntax used to create a deployment, you can skip this step.

For beginners there is a trick: you don't need to memorize the entire structure of the YAML file. Instead, we will run kubectl create with --dry-run, so that the deployment is only validated and not actually created. We will store the output of this dry run in a YAML file and then modify that file as per our requirement to create a new deployment.

So, to create a dummy deployment we use:

[root@controller ~]# kubectl create deployment nginx-deploy --image=nginx --dry-run=client -o yaml > nginx-deploy.yml

This creates a new YAML file with the following content (I will remove the fields that are not required at the moment, such as creationTimestamp, strategy, resources and status):

[root@controller ~]# cat nginx-deploy.yml

apiVersion: apps/v1
kind: Deployment
metadata:
  creationTimestamp: null
  labels:
    app: nginx-deploy
  name: nginx-deploy
spec:
  replicas: 1
  selector:
    matchLabels:
      app: nginx-deploy
  strategy: {}
  template:
    metadata:
      creationTimestamp: null
      labels:
        app: nginx-deploy
    spec:
      containers:
      - image: nginx
        name: nginx
        resources: {}
status: {}

Now we have the basic template required to create a deployment. Since we used --dry-run in the previous command, no deployment was actually created, which you can verify:

[root@controller ~]# kubectl get deployments

No resources found in default namespace.

We can trim the content of nginx-deploy.yml, as we don't need many of the properties in this file. We will also use the label type: dev instead of the existing label and run 2 replicas. Following is my YAML file after making the changes:

[root@controller ~]# cat nginx-deploy.yml

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    type: dev
  name: nginx-deploy
spec:
  replicas: 2
  selector:
    matchLabels:
      type: dev
  template:
    metadata:
      labels:
        type: dev
    spec:
      containers:
      - image: nginx
        name: nginx

Next we will create our deployment and verify the status:

[root@controller ~]# kubectl create -f nginx-deploy.yml

deployment.apps/nginx-deploy created

[root@controller ~]# kubectl get deployments

NAME READY UP-TO-DATE AVAILABLE AGE

nginx-deploy 2/2 1 2 3s

Check the status of the deployment rollout

You can use the usual kubectl get deployment and kubectl describe deployment commands to see details of the Deployment, but there is a dedicated command made specifically for checking a Deployment’s rollout status:

[root@controller ~]# kubectl rollout status deployment nginx-deploy

deployment "nginx-deploy" successfully rolled out

According to this, the Deployment has been successfully rolled out, so you should see the two pod replicas up and running. Let’s see:

[root@controller ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

nginx 1/1 Running 5 4d1h

nginx-deploy-d98cc8bdb-48ppw 1/1 Running 0 80s

nginx-deploy-d98cc8bdb-nvcb5 1/1 Running 0 80s

To check more details of an individual Pod you can use kubectl describe:

[root@controller ~]# kubectl describe Pod nginx-deploy-d98cc8bdb-48ppw

Name: nginx-deploy-d98cc8bdb-48ppw

Namespace: default

Priority: 0

Node: worker-1.example.com/192.168.43.49

Start Time: Wed, 02 Dec 2020 13:05:01 +0530

Labels: pod-template-hash=d98cc8bdb

type=dev

Annotations: <none>

Status: Running

IP: 10.36.0.2

IPs:

IP: 10.36.0.2

Controlled By: ReplicaSet/nginx-deploy-d98cc8bdb

...

Understanding the naming syntax of Pods part of Deployment

Earlier, when we used a ReplicaSet to create pods, their names were composed of the name of the controller plus a randomly generated string (for example, nginx-v1-m33mv). The two pods created by the Deployment include an additional alphanumeric value in the middle of their names. What is that exactly?

The value corresponds to the hash of the pod template in the Deployment and in the ReplicaSet managing these pods. As we said earlier, a Deployment doesn’t manage pods directly. Instead, it creates ReplicaSets and leaves the managing to them, so let’s look at the ReplicaSet created by your Deployment:

[root@controller ~]# kubectl get rs

NAME DESIRED CURRENT READY AGE

nginx-deploy-d98cc8bdb 2 2 2 6m17s

The ReplicaSet’s name also contains the hash value of its pod template. Using the hash value of the pod template like this allows the Deployment to always use the same (possibly existing) ReplicaSet for a given version of the pod template.
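To make the naming scheme concrete, here is a small shell sketch that splits a pod name into its parts using only parameter expansion. It assumes the layout deployment name, pod template hash, random suffix described above, and that neither the hash nor the suffix contains a hyphen. The function name split_pod_name is made up for this illustration:

```shell
#!/usr/bin/env bash
# Hypothetical helper: split a Deployment-managed pod name into its parts.
# Assumes the layout <deployment>-<pod-template-hash>-<random suffix>.
split_pod_name() {
  local pod=$1
  local replicaset=${pod%-*}          # drop the random pod suffix
  local hash=${replicaset##*-}        # last field of the ReplicaSet name
  local deployment=${replicaset%-*}   # drop the hash to get the Deployment name
  echo "deployment=$deployment replicaset=$replicaset hash=$hash"
}

split_pod_name nginx-deploy-d98cc8bdb-48ppw
# deployment=nginx-deploy replicaset=nginx-deploy-d98cc8bdb hash=d98cc8bdb
```

The same hash appears as the pod-template-hash label in the kubectl describe output shown earlier, so kubectl get pods -l pod-template-hash=d98cc8bdb should list exactly the pods of that ReplicaSet.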

Using Kubernetes RollingUpdate

Initially, the pods run the first version of your application, let’s suppose its image is tagged as v1. You then develop a newer version of the app and push it to an image repository as a new image, tagged as v2. You’d next like to replace all the pods with this new version.

You have two ways of updating all those pods. You can do one of the following:

- Recreate: Delete all existing pods first and then start the new ones. This will lead to a temporary unavailability.

- Rolling Update: Replaces old Pods with new ones gradually instead of all at once, so that the application stays available. This is the preferred approach and you can further tune its behaviour.

The RollingUpdate options are used to guarantee a certain minimal and maximal number of Pods during the update:

- maxUnavailable: The maximum number of Pods that can be unavailable during the update. The value can be a percentage (the default is 25%) or an integer. If maxSurge is 0, which means no Pods may be created over the desired number, maxUnavailable cannot be 0.

- maxSurge: The maximum number of Pods that can be created over the desired number of Pods during the update. The value can be a percentage (the default is 25%) or an integer. If maxUnavailable is 0, which means the number of serving Pods should always meet the desired number, maxSurge cannot be 0.
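The effect of these two options is easier to see with numbers. The sketch below turns maxSurge and maxUnavailable into absolute pod counts for a given replica count. When a percentage is used, Kubernetes rounds maxSurge up and maxUnavailable down. The function name rollout_bounds is hypothetical, and rejecting every input where both values resolve to 0 is a simplification of mine:

```shell
#!/usr/bin/env bash
# Hypothetical helper: resolve maxSurge / maxUnavailable to absolute pod counts.
# Percentages are taken from the desired replica count:
# maxSurge rounds up, maxUnavailable rounds down.
rollout_bounds() {
  local replicas=$1 surge=$2 unavailable=$3 pct

  if [[ $surge == *% ]]; then
    pct=${surge%\%}
    surge=$(( (replicas * pct + 99) / 100 ))   # round up
  fi
  if [[ $unavailable == *% ]]; then
    pct=${unavailable%\%}
    unavailable=$(( replicas * pct / 100 ))    # round down
  fi

  # Simplification: treat "both resolve to 0" as an error.
  if (( surge == 0 && unavailable == 0 )); then
    echo "invalid: maxSurge and maxUnavailable cannot both be 0" >&2
    return 1
  fi

  echo "min_available=$(( replicas - unavailable )) max_total=$(( replicas + surge ))"
}

rollout_bounds 4 1 1        # min_available=3 max_total=5
rollout_bounds 10 25% 25%   # min_available=8 max_total=13
```

With 10 replicas and the default 25% for both options, 2.5 is rounded up to 3 surge Pods and down to 2 unavailable Pods, which gives the range of 8 to 13 Pods.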

I will create a new deployment to demonstrate RollingUpdate, for which I will copy the YAML template file from one of my existing deployments:

[root@controller ~]# vim rolling-nginx.yml

Next I will make the required changes and add a strategy for RollingUpdate:

[root@controller ~]# cat rolling-nginx.yml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: rolling-nginx
spec:
  replicas: 4
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxSurge: 1
      maxUnavailable: 1
  selector:
    matchLabels:
      app: rolling-nginx
  template:
    metadata:
      labels:
        app: rolling-nginx
    spec:
      containers:
      - name: nginx
        image: nginx:1.9

Here we want four replicas and we have set the update strategy to RollingUpdate. The maxSurge value is 1, which is the maximum number of pods above the desired number of replicas, and maxUnavailable is also 1. This means that throughout the process we should have at least 3 and at most 5 running pods. Also, for the sake of example, I have explicitly defined a very old version of the nginx image so that we can update it to a newer one in the next section using RollingUpdate.

Let me go ahead and create this deployment:

[root@controller ~]# kubectl create -f rolling-nginx.yml

deployment.apps/rolling-nginx created

List and verify the newly created pods:

[root@controller ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

... ... ... ... ...

rolling-nginx-74cf96d8bb-6f54x 0/1 ContainerCreating 0 43s

rolling-nginx-74cf96d8bb-dmkqj 0/1 ContainerCreating 0 43s

rolling-nginx-74cf96d8bb-gmqjz 0/1 ContainerCreating 0 43s

rolling-nginx-74cf96d8bb-phkth 0/1 ContainerCreating 0 43s

So we have 4 new pods and their containers are currently being created. If we check the status of the deployments after a few seconds, all 4 pods are in the Ready state:

[root@controller ~]# kubectl get deployments

NAME READY UP-TO-DATE AVAILABLE AGE

lab-nginx 2/2 2 2 4h9m

label-nginx-example 2/2 2 2 5h12m

nginx-deploy 2/2 2 2 27h

rolling-nginx 4/4 4 4 107s

To get the list of events for a pod we can use a field selector:

[root@controller ~]# kubectl get event --field-selector involvedObject.name=rolling-nginx-74cf96d8bb-6f54x

LAST SEEN TYPE REASON OBJECT MESSAGE

5m21s Normal Scheduled pod/rolling-nginx-74cf96d8bb-6f54x Successfully assigned default/rolling-nginx-74cf96d8bb-6f54x to worker-2.example.com

5m19s Normal Pulling pod/rolling-nginx-74cf96d8bb-6f54x Pulling image "nginx:1.9"

4m13s Normal Pulled pod/rolling-nginx-74cf96d8bb-6f54x Successfully pulled image "nginx:1.9" in 1m6.412630691s

4m13s Normal Created pod/rolling-nginx-74cf96d8bb-6f54x Created container nginx

4m13s Normal Started pod/rolling-nginx-74cf96d8bb-6f54x Started container nginx

So the containers have started with the nginx:1.9 image.

Check rollout history

So far we have discussed how kubectl can manage updates of an application with no downtime, but you must be wondering how these updates are actually tracked. The answer is the deployment's rollout history. Every time you modify the pod template of the deployment, a new revision is stored in the history. You can use these revision IDs to roll back to an earlier revision.

Let's take an example. Here is the deployment history for the newly created deployment:

[root@controller ~]# kubectl rollout history deployment rolling-nginx

deployment.apps/rolling-nginx

REVISION CHANGE-CAUSE

1 <none>

Since we have not made any changes to this deployment yet, there is only a single revision in the history.

But why is CHANGE-CAUSE showing <none>? It is because we did not use --record while creating our deployment. The --record argument stores the command that was run under CHANGE-CAUSE for each revision.

So, I will delete this deployment and create the same again:

[root@controller ~]# kubectl delete deployment rolling-nginx

deployment.apps "rolling-nginx" deleted

and this time I will use --record along with kubectl create:

[root@controller ~]# kubectl create -f rolling-nginx.yml --record

deployment.apps/rolling-nginx created

Now verify the revision history. This time the command for the first revision is recorded:

[root@controller ~]# kubectl rollout history deployment rolling-nginx

deployment.apps/rolling-nginx

REVISION CHANGE-CAUSE

1 kubectl create --filename=rolling-nginx.yml --record=true
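The CHANGE-CAUSE column is filled from the kubernetes.io/change-cause annotation on the Deployment, which is what --record sets for you. Recent kubectl releases mark --record as deprecated, so setting the annotation yourself is a reasonable alternative. The following is only a sketch; the helper name set_change_cause and the message text are placeholders of mine:

```shell
#!/usr/bin/env bash
# Hypothetical helper: record a change cause without using --record.
# It sets the kubernetes.io/change-cause annotation that
# "kubectl rollout history" shows in the CHANGE-CAUSE column.
set_change_cause() {
  local deployment=$1 cause=$2
  kubectl annotate deployment "$deployment" \
    "kubernetes.io/change-cause=$cause" --overwrite
}
```

For example, set_change_cause rolling-nginx "initial rollout with nginx:1.9" attaches a free-text message to the current revision instead of the recorded command line.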

Modify the deployment and initiate the update

Let us modify the deployment to verify our RollingUpdate. If you remember, we used a very old nginx image for our containers, so we will update the image to a different nginx version:

[root@controller ~]# kubectl set image deployment rolling-nginx nginx=nginx:1.15 --record

deployment.apps/rolling-nginx image updated

Here, I have just updated the image to nginx:1.15 instead of 1.9. Alternatively, you can use kubectl edit to edit the YAML definition of the deployment.

Monitor the rolling update status

Now as soon as you modify the pod template, the update starts automatically. To monitor the rollout status you can use:

[root@controller ~]# kubectl rollout status deployment rolling-nginx

Waiting for deployment "rolling-nginx" rollout to finish: 1 old replicas are pending termination...

Waiting for deployment "rolling-nginx" rollout to finish: 1 old replicas are pending termination...

Waiting for deployment "rolling-nginx" rollout to finish: 1 old replicas are pending termination...

Waiting for deployment "rolling-nginx" rollout to finish: 3 of 4 updated replicas are available...

deployment "rolling-nginx" successfully rolled out

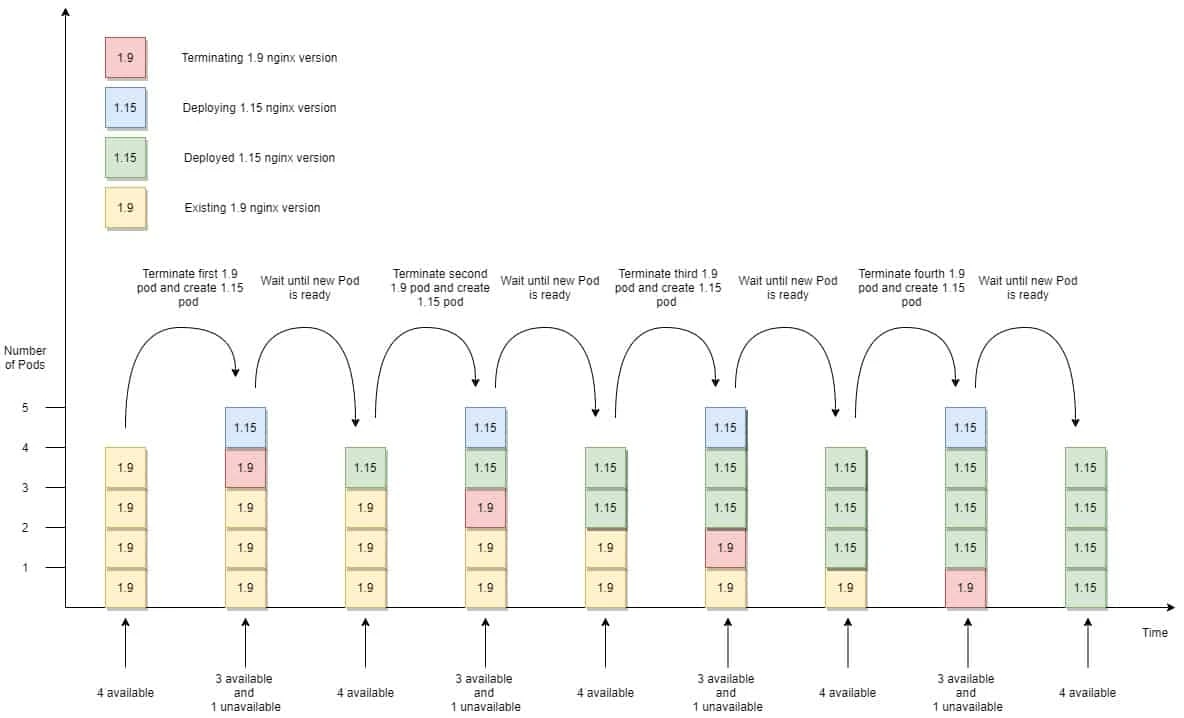

Here is a diagram which explains the rolling update procedure:

So, what is happening here?

- In this case, one replica can be unavailable, so since the desired replica count is 4, only 3 of them need to be available.

- That’s why the rollout process immediately deletes one old pod. Because maxSurge is 1, it can also create new pods until the total reaches 5, one more than the desired count.

- This ensures that at least 3 pods are available and that the maximum of 5 pods isn’t exceeded.

- As soon as a new pod is available, the next old pod is terminated and another new pod is started with the updated image.

- This continues until all the pods are running the updated nginx image.
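To see how the two limits interact, the following shell sketch steps through a deliberately simplified model of the procedure described above. It is not the Deployment controller's actual algorithm. The function name simulate_rollout and the assumption that a new pod becomes ready exactly one step after it is created are mine, so the pod counts on a real cluster will differ depending on timing:

```shell
#!/usr/bin/env bash
# Toy model of a rolling update; NOT the Deployment controller's real algorithm.
# Assumption: a new pod becomes ready one tick after it is created.
simulate_rollout() {
  local replicas=$1 max_surge=$2 max_unavailable=$3
  if (( max_surge == 0 && max_unavailable == 0 )); then
    echo "maxSurge and maxUnavailable cannot both be 0" >&2
    return 1
  fi
  local max_total=$(( replicas + max_surge ))
  local min_available=$(( replicas - max_unavailable ))
  local old=$replicas new=0 ready_new=0 tick=0 create remove

  while (( old > 0 || ready_new < replicas )); do
    tick=$(( tick + 1 ))
    ready_new=$new   # pods created in earlier ticks are ready now

    # Scale up: never exceed replicas + maxSurge pods in total.
    create=$(( max_total - old - new ))
    if (( create > replicas - new )); then create=$(( replicas - new )); fi
    if (( create < 0 )); then create=0; fi
    new=$(( new + create ))

    # Scale down: keep at least replicas - maxUnavailable pods available.
    remove=$(( old + ready_new - min_available ))
    if (( remove > old )); then remove=$old; fi
    if (( remove < 0 )); then remove=0; fi
    old=$(( old - remove ))

    echo "tick=$tick old=$old new=$new available=$(( old + ready_new )) total=$(( old + new ))"
  done
}
```

Calling simulate_rollout 4 1 1 prints one line per step. In this model the available count never drops below 3 and the total never exceeds 5, which matches the limits set in rolling-nginx.yml.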

Pause and Resume rollout process

Suppose you want to verify the update on a few pods before updating your entire deployment. In that case you can pause the rollout process using the following syntax:

kubectl rollout pause deployment <deployment-name>

I will again modify my deployment's image, this time to nginx 1.16 (at this stage, open another terminal on your controller so that you can monitor the status of the deployment):

[root@controller ~]# kubectl set image deployment rolling-nginx nginx=nginx:1.16 --record

deployment.apps/rolling-nginx image updated

Now I will immediately pause the rollout process:

[root@controller ~]# kubectl rollout pause deployment rolling-nginx

deployment.apps/rolling-nginx paused

On another terminal of the controller node, I am monitoring the status of the rollout:

[root@controller ~]# kubectl rollout status deployment rolling-nginx

Waiting for deployment "rolling-nginx" rollout to finish: 2 out of 4 new replicas have been updated...

Waiting for deployment "rolling-nginx" rollout to finish: 2 out of 4 new replicas have been updated...

..

At this stage only 2 out of 4 pods are deployed with the nginx 1.16 image, after which the rollout was paused. You can now perform any validation before you resume the rollout.

Check the list of available pods:

[root@controller ~]# kubectl get pods -l app=rolling-nginx

NAME READY STATUS RESTARTS AGE

rolling-nginx-6dc6fcd44c-46ckr 1/1 Running 0 69m

rolling-nginx-6dc6fcd44c-4bzzj 1/1 Running 0 69m

rolling-nginx-6dc6fcd44c-bhbqb 1/1 Running 0 69m

rolling-nginx-765c4fc67d-cfn6n 1/1 Running 0 3m31s

rolling-nginx-765c4fc67d-dzzrg 1/1 Running 0 3m31s

We have 5 pods in the Running state because our maxSurge value is 1. From the AGE column you can figure out which pods are newly created, i.e.

rolling-nginx-765c4fc67d-cfn6n 1/1 Running 0 3m31s

rolling-nginx-765c4fc67d-dzzrg 1/1 Running 0 3m31s
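AGE only hints at which pods are new. While the rollout is paused, you can also confirm which image each pod actually runs. A minimal sketch using kubectl's custom-columns output follows; the helper name pod_images is hypothetical and it only looks at the first container of each pod:

```shell
#!/usr/bin/env bash
# Hypothetical helper: print each pod's name and the image of its first container.
pod_images() {
  local selector=$1   # label selector, e.g. app=rolling-nginx
  kubectl get pods -l "$selector" \
    -o 'custom-columns=NAME:.metadata.name,IMAGE:.spec.containers[0].image'
}
```

Running pod_images app=rolling-nginx during the pause should show the old image for the three older pods and nginx:1.16 for the two new ones.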

You can also use the minReadySeconds property, which specifies how long a newly created pod should be ready before the pod is treated as available. Until the pod is available, the rollout process will not continue.
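minReadySeconds is a field of the Deployment spec and defaults to 0. As a sketch, it can be set on an existing deployment with kubectl patch. The helper name set_min_ready_seconds and the value of 10 seconds used below are my own choices for illustration:

```shell
#!/usr/bin/env bash
# Hypothetical helper: set spec.minReadySeconds on an existing Deployment
# using a JSON merge patch.
set_min_ready_seconds() {
  local deployment=$1 seconds=$2
  kubectl patch deployment "$deployment" --type merge \
    -p "{\"spec\":{\"minReadySeconds\":${seconds}}}"
}
```

For example, set_min_ready_seconds rolling-nginx 10 asks Kubernetes to wait until a new pod has been ready for 10 seconds before counting it as available. You could equally add minReadySeconds: 10 under spec in rolling-nginx.yml.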

Once you are done with the verification, you can resume the rollout using:

[root@controller ~]# kubectl rollout resume deployment rolling-nginx

deployment.apps/rolling-nginx resumed

You can again check the progress of the rollout using the following command, which I had left running in another terminal when I paused the rollout:

[root@controller ~]# kubectl rollout status deployment rolling-nginx

Waiting for deployment "rolling-nginx" rollout to finish: 2 out of 4 new replicas have been updated...

Waiting for deployment "rolling-nginx" rollout to finish: 2 out of 4 new replicas have been updated...

Waiting for deployment "rolling-nginx" rollout to finish: 2 out of 4 new replicas have been updated...

Waiting for deployment spec update to be observed...

Waiting for deployment spec update to be observed...

Waiting for deployment "rolling-nginx" rollout to finish: 2 out of 4 new replicas have been updated...

Waiting for deployment "rolling-nginx" rollout to finish: 2 out of 4 new replicas have been updated...

Waiting for deployment "rolling-nginx" rollout to finish: 1 old replicas are pending termination...

Waiting for deployment "rolling-nginx" rollout to finish: 1 old replicas are pending termination...

Waiting for deployment "rolling-nginx" rollout to finish: 1 old replicas are pending termination...

Waiting for deployment "rolling-nginx" rollout to finish: 3 of 4 updated replicas are available...

deployment "rolling-nginx" successfully rolled out

Rolling back (undo) an update

Deployments make it easy to roll back to the previously deployed version by telling Kubernetes to undo the last rollout of a Deployment. You can first check the rollout history to find the ID of the revision to which you wish to roll back the deployment:

[root@controller ~]# kubectl rollout history deployment rolling-nginx

deployment.apps/rolling-nginx

REVISION CHANGE-CAUSE

2 kubectl set image deployment rolling-nginx nginx=nginx:1.15 --record=true

3 kubectl set image deployment rolling-nginx nginx=nginx:1.16 --record=true

In my case the history lists 2 revisions, and ID 3 is the latest one, so I can roll back to revision 2, where we deployed version 1.15 of the nginx image.

[root@controller ~]# kubectl rollout undo deployment rolling-nginx --to-revision=2

deployment.apps/rolling-nginx rolled back

Monitor the status of the rollback:

[root@controller ~]# kubectl rollout status deployment rolling-nginx

Waiting for deployment "rolling-nginx" rollout to finish: 2 out of 4 new replicas have been updated...

Waiting for deployment "rolling-nginx" rollout to finish: 2 out of 4 new replicas have been updated...

Waiting for deployment "rolling-nginx" rollout to finish: 2 out of 4 new replicas have been updated...

Waiting for deployment "rolling-nginx" rollout to finish: 3 old replicas are pending termination...

Waiting for deployment "rolling-nginx" rollout to finish: 1 old replicas are pending termination...

Waiting for deployment "rolling-nginx" rollout to finish: 1 old replicas are pending termination...

Waiting for deployment "rolling-nginx" rollout to finish: 1 old replicas are pending termination...

Waiting for deployment "rolling-nginx" rollout to finish: 1 old replicas are pending termination...

deployment "rolling-nginx" successfully rolled out
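Two variations of the rollback commands are worth knowing. kubectl rollout history accepts --revision to print the pod template stored for a single revision, and kubectl rollout undo without --to-revision goes back to the previous revision. The wrapper names below are hypothetical; only the kubectl commands inside them are real:

```shell
#!/usr/bin/env bash
# Hypothetical helpers around kubectl rollout.

# Print the pod template stored for one revision of a Deployment.
show_revision() {
  local deployment=$1 revision=$2
  kubectl rollout history deployment "$deployment" --revision="$revision"
}

# Roll back to the previous revision (no --to-revision given).
undo_last_rollout() {
  local deployment=$1
  kubectl rollout undo deployment "$deployment"
}
```

For example, show_revision rolling-nginx 2 lets you confirm that revision 2 uses nginx:1.15 before you roll back to it. After a rollback, the restored revision is typically listed under a new, higher revision number in the history.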

Conclusion

In this Kubernetes tutorial we learned about updating applications running in Pods using the RollingUpdate strategy with Deployments. To summarise what we learned:

- Create Deployments instead of lower-level ReplicationControllers or ReplicaSets

- Update your pods by editing the pod template in the Deployment specification

- Roll back a Deployment either to the previous revision or to any earlier revision still listed in the revision history

- Pause a Deployment to inspect how a single instance of the new version behaves in production before allowing additional pod instances to replace the old ones

- Control the rate of the rolling update through the maxSurge and maxUnavailable properties

- Use minReadySeconds and readiness probes to have the rollout of a faulty version blocked automatically
